Robotic Arm Moves with a Glance... AI Smart Contact Lens Developed [Reading Science]

UNIST Combines 100 Sensors on Lens with Super-Resolution AI

Beyond XR: From Disaster Response to Medical Robot Control

An ultra-lightweight human-machine interface has been developed that lets a user move a robotic arm simply by moving their eyes. Built as a "smart contact lens," it converts gaze information directly into robot control signals and is emerging as a next-generation wearable platform that offers an alternative to heavy, complex headset-type extended reality (XR) devices.

On April 15, a research team led by Professor Jeong Imdoo of the Department of Mechanical Engineering and the Graduate School of Artificial Intelligence at Ulsan National Institute of Science and Technology (UNIST) announced that it had developed a smart contact lens capable of remotely controlling a robotic arm with eye movements alone. The team achieved this by combining a process that prints optical sensors directly onto the lens surface with an artificial intelligence (AI)-based super-resolution signal restoration technique. The results were selected as the cover paper for the latest issue of Advanced Functional Materials, an international materials science journal.

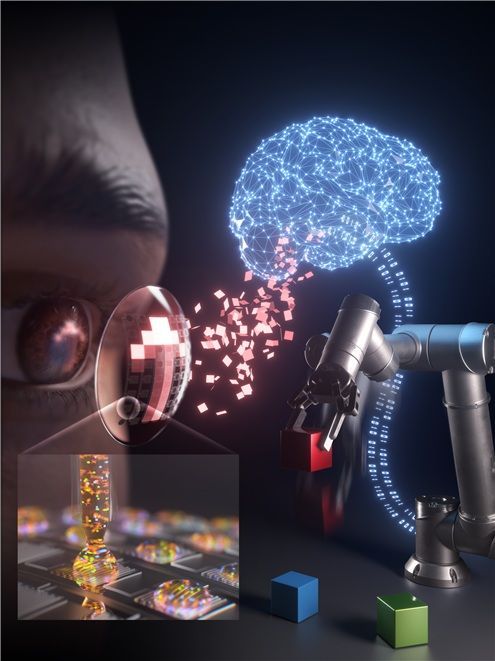

Conceptual diagram of a smart contact lens controlling a robot through eye movement. The lens's optical sensors read the light distribution to estimate gaze direction, and AI restores the signal at high resolution before it is converted into control signals for the robotic arm. The illustration also shows the Meniscus Pixel Printing (MPP) process used to print the sensors. Provided by Professor Jeong Imdoo of UNIST.

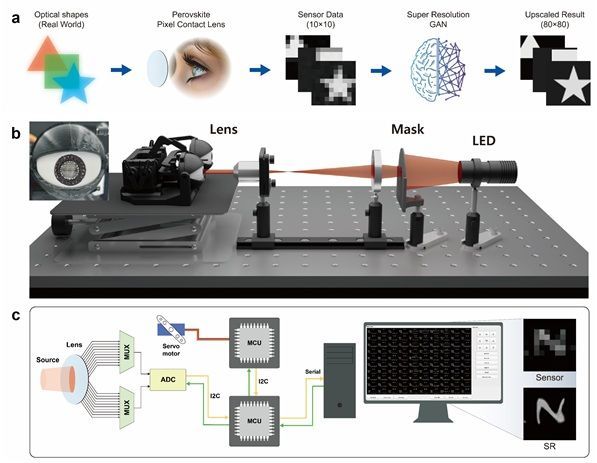

The core of this technology is a 10×10 array of photodetectors integrated onto a contact lens. The 100 sensors read changes in the light distribution in real time as the eye moves, which makes it possible to track gaze direction. The system can precisely distinguish not only up, down, left, and right, but also diagonal directions. It also uses blinking as a separate command signal, so the robotic arm can perform actions such as grasping objects.
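As a rough illustration of how a 10×10 light-distribution reading could be turned into a direction or blink command, the sketch below uses a simple intensity-centroid heuristic. This is not the team's method, which the article does not describe at this level. The function name `classify_gaze`, the thresholds `dead_zone` and `blink_level`, the label set, and the assumption that row 0 is the top of the array are all hypothetical choices made for the example.

```python
import numpy as np

# Illustrative labels indexed by (horizontal sign, vertical sign).
# Treating row 0 as "up" is an assumption, not something the article states.
LABELS = {
    (0, 0): "center",
    (0, -1): "up", (0, 1): "down",
    (-1, 0): "left", (1, 0): "right",
    (-1, -1): "up-left", (1, -1): "up-right",
    (-1, 1): "down-left", (1, 1): "down-right",
}

def classify_gaze(frame, dead_zone=0.15, blink_level=0.2):
    """Map a 2D array of non-negative sensor readings to a label.

    dead_zone and blink_level are hypothetical tuning values.
    """
    frame = np.asarray(frame, dtype=float)

    # Hypothetical blink rule: a closed eyelid blocks most of the light,
    # so the mean reading collapses.
    if frame.mean() < blink_level:
        return "blink"

    # Intensity-weighted centroid of the light distribution.
    rows, cols = np.indices(frame.shape)
    total = frame.sum()
    cy = (rows * frame).sum() / total
    cx = (cols * frame).sum() / total

    # Normalize the centroid offset from the array center to [-1, 1].
    half_h = (frame.shape[0] - 1) / 2
    half_w = (frame.shape[1] - 1) / 2
    ny = (cy - half_h) / half_h
    nx = (cx - half_w) / half_w

    # Offsets inside the dead zone count as "no movement" on that axis.
    sx = 0 if abs(nx) < dead_zone else int(np.sign(nx))
    sy = 0 if abs(ny) < dead_zone else int(np.sign(ny))
    return LABELS[(sx, sy)]

# Example: uniform background with a bright patch in the upper-right corner.
demo = np.full((10, 10), 0.5)
demo[0:3, 7:10] += 5.0
print(classify_gaze(demo))  # up-right
```

A learned classifier would likely replace the fixed thresholds in practice; the centroid rule is only meant to make the idea of "reading the light distribution" concrete.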

Overcoming Curved Lens Limitations with AI

The key achievement is that the team has simultaneously solved two major challenges that have hindered the commercialization of smart lenses: integrating sensors on a curved surface and achieving high resolution in an ultra-small space.

To achieve this, the research team devised a new process called "Meniscus Pixel Printing (MPP)." This method utilizes the surface tension of liquid at the nozzle tip to directly deposit sensor ink at desired positions on the curved lens. It enables customized sensor printing to accommodate various eyeball curvatures without the need for a separate mask, making it advantageous for personalized lens manufacturing. Unlike conventional flat-panel semiconductor processes, which suffer from distortion issues on curved surfaces, this approach allows for precise patterning to be completed within seconds.

Schematic diagram of the AI smart contact lens system. a. Data flow of the AI-based super-resolution sensing system. b-c. Testbed configuration used to validate the smart contact lens. Provided by Professor Jeong Imdoo of UNIST.

Furthermore, by adding AI-based super-resolution restoration, the team overcame the limited surface area of the lens. Although the lens carries only 100 physical sensors, applying a super-resolution generative adversarial network (SRGAN) restores high-resolution information equivalent to an array of up to 80×80, or 6,400, sensors. Inference takes only about 0.03 seconds, which makes virtually real-time control possible.
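To make the scale of that restoration concrete, the sketch below upsamples a 10×10 reading to 80×80 with plain linear interpolation and works out the update rate implied by a 0.03-second inference time. Linear interpolation is a deliberately naive stand-in, not SRGAN: a trained network learns to recover detail that interpolation cannot. The function name `upsample_linear` and the random test frame are assumptions for the example.

```python
import numpy as np

def upsample_linear(frame, factor=8):
    """Separable linear interpolation of a 2D array by an integer factor.

    A naive baseline only; it is not the SRGAN model described in the article.
    """
    frame = np.asarray(frame, dtype=float)
    h, w = frame.shape
    new_x = np.linspace(0, w - 1, w * factor)
    new_y = np.linspace(0, h - 1, h * factor)

    # Interpolate along each row, then along each column of the result.
    wide = np.array([np.interp(new_x, np.arange(w), row) for row in frame])
    tall = np.array([np.interp(new_y, np.arange(h), col) for col in wide.T]).T
    return tall

rng = np.random.default_rng(0)
low_res = rng.random((10, 10))       # stand-in for one 100-sensor reading
high_res = upsample_linear(low_res)  # 8x per axis

print(high_res.shape)                # (80, 80)
print(high_res.size // low_res.size) # 64 values per physical sensor

# About 0.03 s per inference corresponds to roughly 33 updates per second.
print(round(1 / 0.03, 1))            # 33.3
```

Going from 10×10 to 80×80 is an 8× increase along each axis, so each physical sensor has to account for 64 restored values.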

In particular, even under extreme conditions with the number of sensors reduced to 5×5, AI-based restoration improved the recognition accuracy of nine types of eye movements from 88.4% to 99.3%. In tests using an eyeball model, the system stably performed complex tasks such as grasping and moving objects with a robotic arm using only gaze input.
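The accuracy figures are easier to compare as error rates. The sketch below does that arithmetic and also shows one hypothetical way to emulate a 5×5 array from 10×10 data by averaging 2×2 blocks. The article does not say how the reduced configuration was produced, so `bin_to_5x5` is purely an assumption, as is the helper name `error_rates`.

```python
import numpy as np

def bin_to_5x5(frame):
    """Average each 2x2 block of a 10x10 array (hypothetical reduction)."""
    frame = np.asarray(frame, dtype=float)
    return frame.reshape(5, 2, 5, 2).mean(axis=(1, 3))

def error_rates(acc_before, acc_after):
    """Return (error before, error after, reduction factor) in percent terms."""
    err_before = 100.0 - acc_before
    err_after = 100.0 - acc_after
    return err_before, err_after, err_before / err_after

coarse = bin_to_5x5(np.arange(100).reshape(10, 10))
print(coarse.shape)  # (5, 5)

before, after, factor = error_rates(88.4, 99.3)
print(f"{before:.1f}% -> {after:.1f}% misclassified, about {factor:.1f}x fewer errors")
```

In other words, the reported jump from 88.4% to 99.3% accuracy means the misclassification rate fell from 11.6% to 0.7%.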

Professor Jeong Imdoo stated, "We have demonstrated the feasibility of an advanced human-robot interaction system that directly converts human visual information into robot control signals without a separate controller," adding, "This technology can be rapidly expanded to industrial remote robots, disaster exploration robots, defense unmanned systems, medical and rehabilitation assistive devices, and smart mobility interfaces."

This achievement not only addresses the weight issue of XR devices but also significantly advances the practical implementation of "eye-based interfaces" for environments where hand use is difficult or for users with restricted physical movement. It is expected to become a new standard interface in fields requiring high-precision visual input, such as remote control in disaster sites, surgical assistive robots, and drone operation.

© The Asia Business Daily(www.asiae.co.kr). All rights reserved.
