"AI Governance Is Key": PwC Consulting Presents Roadmap for the AI Basic Act

As a legal framework for the responsible use of artificial intelligence (AI), now a core element of corporate competitiveness, takes shape, companies are being advised to go beyond mere regulatory compliance and proactively build systematic, practical management systems tailored to the characteristics of their own AI services.

PwC Consulting announced on the 19th that it has published a report titled "Implementation of the AI Basic Act: What Should Companies Prepare For?" containing these insights.

The "AI Basic Act on the Promotion of AI and the Establishment of a Trust-Based Foundation" is set to take effect on January 22 of next year. The law aims to promote the sound development of AI technology and to build social trust. Its subordinate regulations are scheduled to go through legislative notice next month, with their enactment to be completed within the year. South Korea is the second in the world, after the European Union with its "AI Act," to enact AI-related legislation.

According to the report, the core of the AI Basic Act is the newly introduced concept of "high-impact AI." High-impact AI refers to AI used in 11 key areas, including energy supply and drinking water production, that may have a significant impact on, or pose significant risks to, human life, physical safety, or fundamental rights. Operators of high-impact AI are required to fulfill obligations such as prior review, advance notification, and the implementation of safety and reliability measures.

In addition, the AI Basic Act defines operators of high-impact AI, generative AI, and high-performance AI using large-scale computation as "AI business operators" and imposes on them obligations to ensure transparency and safety.

The report identified three main challenges companies will face in responding to the AI Basic Act: ▲ the complexity of establishing an AI risk management system, ▲ the lack of management systems for AI training data and models, and ▲ ensuring the effectiveness of user protection measures. The report explained, "Responding to the AI Basic Act requires more than a purely technical approach; it necessitates structural changes across corporate strategy, organization, technology, and operations."

The report proposed the establishment of an "AI governance system" as a key solution. AI governance is not simply a means of technical control but an enterprise-wide management framework that integrates corporate strategy, operations, compliance, and risk management. The report emphasized, "AI governance should serve not only as a tool for responding to external regulations but also, internally, as a core competency that enables companies to strategically utilize and control AI technology."

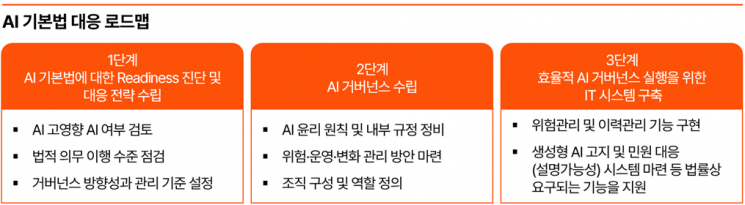

For an effective response to the AI Basic Act, the report suggested a three-step roadmap: ▲ assessment of readiness and development of response strategies for the AI Basic Act, ▲ establishment of an AI governance system, and ▲ implementation of IT systems for efficient AI governance.

Kim Jinyu, Partner and Head of the AI Trust Center at PwC Consulting, stated, "With the implementation of the AI Basic Act approaching, many companies are weighing which direction to take between innovation and regulation," adding, "We hope this report serves as a practical guideline for the responsible use of AI technology and for securing AI competitiveness built on trust."

The full report is available on the PwC Consulting website.

© The Asia Business Daily(www.asiae.co.kr). All rights reserved.