Even in Dim Lighting, Accurately Capturing Facial Expressions and Emotional Changes

KAIST Research Team Combines AI and Near-Infrared Light Field Camera

Emotional Expressions Distinguished with About 85% Accuracy

[Asia Economy Reporter Kim Bong-su] A technology has been developed that uses artificial intelligence and an advanced camera to accurately capture changes in facial expression and distinguish emotions, even in low light.

KAIST announced on the 7th that a joint research team led by Professors Jeong Ki-hoon and Lee Do-heon of the Department of Bio and Brain Engineering has developed a technology that classifies emotional facial expressions by combining a near-infrared light-field camera with artificial intelligence.

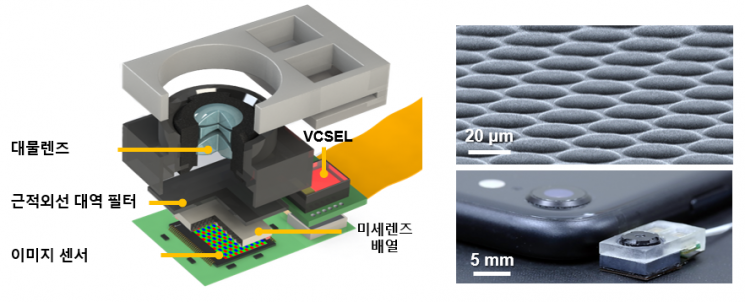

Unlike conventional cameras, light-field cameras place a microlens array in front of the image sensor. Although they are small enough to hold in one hand, they can capture both the spatial and the directional information of light in a single shot. This enables a variety of image reconstructions, such as multi-view images, digital refocusing, and 3D imaging, and the technology is attracting attention for its wide range of applications.
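The article does not describe how the KAIST team processes its raw images. As a generic illustration of the digital refocusing mentioned above, the sketch below implements the textbook shift-and-add method on a tiny light field. It assumes a hypothetical 4D array indexed lf[u][v][y][x], where (u, v) selects a sub-aperture view (one viewing direction through the microlens array) and (y, x) a pixel within that view, and it uses whole-pixel shifts for simplicity. The function names are illustrative only, not part of any published code.

```python
from typing import List

# Hypothetical layout: lf[u][v][y][x]
#   (u, v) = angular index, i.e. which viewing direction (sub-aperture view)
#   (y, x) = spatial pixel position within that view
LightField = List[List[List[List[float]]]]
Image = List[List[float]]


def sub_aperture_view(lf: LightField, u: int, v: int) -> Image:
    """Return one multi-view image: every pixel as seen from direction (u, v)."""
    return [row[:] for row in lf[u][v]]


def refocus(lf: LightField, shift: int) -> Image:
    """Shift-and-add refocusing (illustrative sketch).

    Each view is shifted in proportion to its angular offset from the
    central view, then all views are averaged. `shift` (pixels per angular
    step) picks the synthetic focal plane: points whose parallax matches it
    line up and stay sharp, others are spread out (blurred). shift = 0
    simply averages the views, keeping only zero-parallax points sharp.
    """
    n_u, n_v = len(lf), len(lf[0])
    h, w = len(lf[0][0]), len(lf[0][0][0])
    cu, cv = (n_u - 1) // 2, (n_v - 1) // 2  # index of the central view
    out = [[0.0] * w for _ in range(h)]
    for u in range(n_u):
        for v in range(n_v):
            dy, dx = shift * (u - cu), shift * (v - cv)
            for y in range(h):
                for x in range(w):
                    # Clamp sampling coordinates at the image border.
                    sy = min(max(y + dy, 0), h - 1)
                    sx = min(max(x + dx, 0), w - 1)
                    out[y][x] += lf[u][v][sy][sx]
    n_views = n_u * n_v
    return [[val / n_views for val in row] for row in out]


if __name__ == "__main__":
    # Toy light field: 1 x 3 views, each a 1 x 5 image. A single bright point
    # appears at x = 1, 2, 3 in views v = 0, 1, 2 (one pixel of parallax per view).
    lf = [[[[1.0 if x == 1 + v else 0.0 for x in range(5)]] for v in range(3)]]
    print(refocus(lf, 1)[0])  # in focus: [0.0, 0.0, 1.0, 0.0, 0.0]
    print(refocus(lf, 0)[0])  # out of focus: energy spread over x = 1..3
```

With shift=1 the point's one-pixel-per-view parallax is cancelled and it lands on a single bright pixel; with shift=0 it is smeared across three pixels at one third of the intensity. Real pipelines use sub-pixel interpolation and calibrated microlens geometry, which this sketch deliberately omits.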

However, existing light-field cameras have limitations: shadows cast by indoor lighting lower the accuracy of 3D reconstruction, and optical crosstalk between microlenses reduces image contrast.

The research team equipped the light-field camera with a near-infrared vertical-cavity surface-emitting laser (VCSEL) light source and a near-infrared bandpass filter, addressing the loss of 3D reconstruction accuracy under varying lighting conditions that affects conventional light-field cameras. With external lighting positioned at 0°, 30°, and 60° relative to the front of the face, the near-infrared bandpass filter reduced image reconstruction errors by up to 54%. In addition, by fabricating a light-absorbing layer between the microlenses that absorbs both visible and near-infrared light, the team minimized optical crosstalk and improved the contrast of raw images by about 2.1 times compared with previous levels.

With these improvements, the team overcame the limitations of existing light-field cameras and developed a near-infrared light-field camera (NIR-LFC) optimized for reconstructing 3D images of facial expressions. Using this camera, the team acquired high-quality 3D reconstructions of subjects’ faces showing various emotional expressions, regardless of lighting conditions.

Applying machine learning to the acquired 3D facial images, the team classified expressions with an average accuracy of about 85%, a statistically significant improvement over classification using 2D images. Moreover, by calculating the interdependence of 3D facial distance information across expressions, the team confirmed that the light-field camera can provide clues about which information humans or machines rely on when interpreting expressions.

Professor Jeong Ki-hoon explained the significance of the research, stating, "The ultra-compact light-field camera developed by our team is expected to be used as a new platform for quantitatively analyzing human facial expressions and emotions," adding, "It will be applied in fields such as mobile healthcare, on-site diagnostics, social cognition, and human-machine interaction."


The research results were published online on the 16th of last month in the international journal Advanced Intelligent Systems.

© The Asia Business Daily(www.asiae.co.kr). All rights reserved.