High-Quality Text Expected to Run Out Soon

Artificial intelligence (AI) has long seemed destined to keep getting smarter. New models appeared every year, responses grew more natural, and AI rapidly closed the gap with human capabilities. We have come to take its progress for granted.

However, a different question has begun to emerge in the AI industry and research community. What happens if AI has nothing left to learn? Can the advancement of AI truly continue without end?

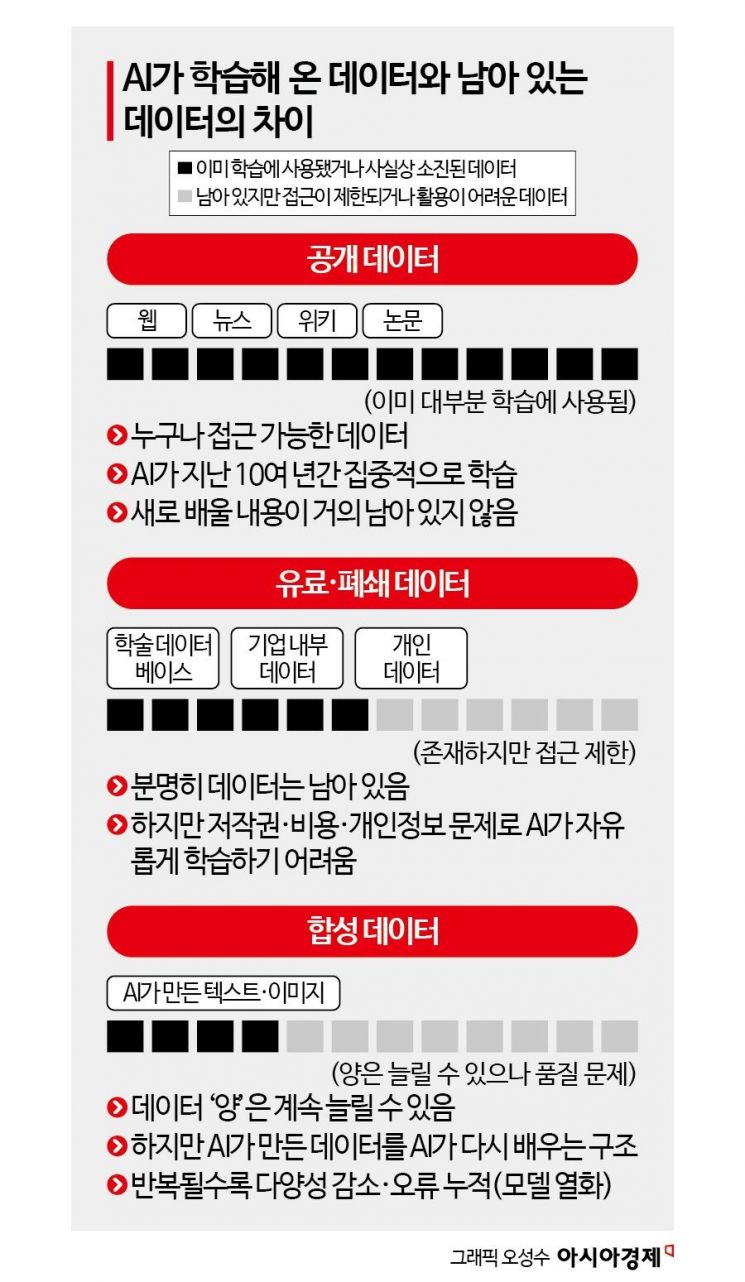

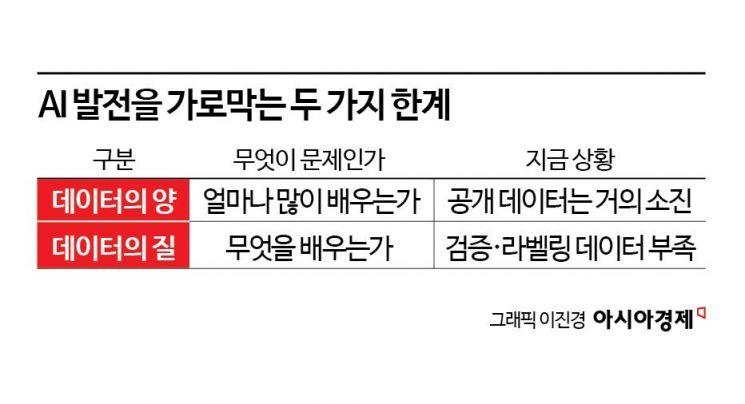

The starting point for this question is “training data.” AI does not experience the world on its own. It learns about the world through records left by humans: text, images, video, and audio. The intelligence of AI is not determined by computational power alone; it is heavily influenced by the diversity and quality of the data it has learned from. Warnings are mounting that these learning resources are approaching their limits.

Critical Weakness Leading to Model Collapse

'Human Records' Becoming Important Again

The 'Gold Mine' of the Internet Is Running Out: A Warning for 2026

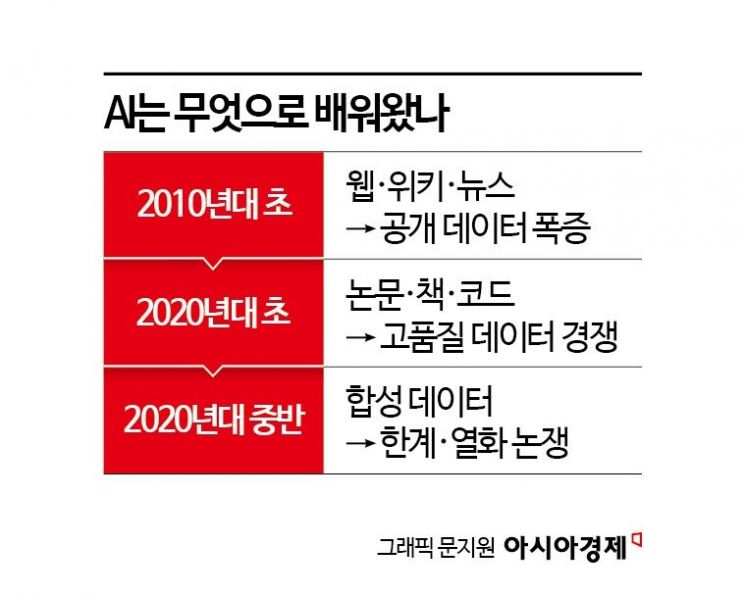

Until now, large language models (LLMs) have grown by relying on the vast troves of data available on the internet. Web documents, news articles, books, and academic papers have served as AI’s textbooks. However, a significant portion of high-quality, publicly accessible data has already been collected.

Epoch AI, a global AI research institute, recently warned in a report that the stock of high-quality, human-generated text data could be completely exhausted sometime between 2026 and 2030. Much of what remains is either tightly restricted by copyright or is paid data that is costly to access.

As a result, it has become virtually impossible for AI companies to continue the “unauthorized mass collection” of training data that they once relied on. Securing data has shifted from a matter of technological competition to one of enormous capital and legal battles. Copyright lawsuits filed against companies such as OpenAI by major media outlets, including The New York Times, and by authors illustrate the “data barriers” now facing the AI industry.

Lee Seongyeop, professor at Korea University’s Department of Intellectual Property Strategy, said, “Large language models have already scraped most of the publicly available data on the web. Simply increasing the quantity of data now means mixing in redundant or reprocessed low-quality text, so the marginal utility for intelligence improvement is rapidly decreasing.”

He added, “Now, what’s needed is not just simple corpora, but data that is meticulously labeled with complex logical structures and human value judgments. However, the cost of producing and verifying such data is increasing exponentially.”
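The point about redundant text can be made concrete with a small sketch. The Python below is a hypothetical illustration, not a description of how any AI company filters its data: the function names `normalize` and `novel_fraction` are invented here, and matching on a hash of normalized text is only one simple way to detect repeats. It measures what share of a newly collected batch is actually new to an existing corpus.

```python
import hashlib
import re


def normalize(text):
    """Lowercase, drop punctuation, and collapse whitespace (an assumed, simple normalization)."""
    text = re.sub(r"[^\w\s]", "", text.lower())
    return " ".join(text.split())


def fingerprint(text):
    """Return a SHA-256 digest of the normalized text."""
    return hashlib.sha256(normalize(text).encode("utf-8")).hexdigest()


def novel_fraction(corpus, new_docs):
    """Share of new_docs whose normalized text is not already in corpus
    and has not appeared earlier in new_docs."""
    if not new_docs:
        return 0.0
    seen = {fingerprint(doc) for doc in corpus}
    novel = 0
    for doc in new_docs:
        digest = fingerprint(doc)
        if digest not in seen:
            seen.add(digest)
            novel += 1
    return novel / len(new_docs)


if __name__ == "__main__":
    corpus = ["The cat sat.", "Data is finite."]
    batch = ["the cat  sat", "Data is finite!", "A new fact.", "A new fact."]
    # Only one of the four documents in the batch is new to the corpus.
    print(novel_fraction(corpus, batch))  # prints 0.25
```

A batch that looks large can therefore contribute very little: in this toy case, four collected documents add only one new one. Real pipelines would also need to catch paraphrases and near-duplicates, which exact hashing does not.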

The Paradox of Synthetic Data: The Invisible Wall of 'Model Collapse'

The industry has turned its attention to “synthetic data” as an alternative to data scarcity. This approach involves using AI-generated text and images to train the next generation of AI. The idea is that if human records are lacking, AI can generate its own data and self-improve. However, this method has recently revealed a fatal structural flaw known as “model collapse.”

A joint research team from the University of Oxford, the University of Cambridge, and the University of Toronto published a paper in the journal Nature showing that models trained repeatedly on AI-generated data forget the original data distribution and, within just a few generations, begin to produce incoherent output, a kind of “intelligence regression.” The researchers analyzed how AI rapidly erodes informational diversity by treating statistically rare cases (outliers) as mere errors and removing them.

This is akin to photocopying a photograph over and over until the image becomes unrecognizable: the same kind of “degradation” is now occurring in the realm of intelligence. Ultimately, AI that relies solely on synthetic data becomes trapped in an “echo chamber,” endlessly reproducing only biased information.
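The mechanism can be seen in a toy simulation. The sketch below is not the researchers' experiment; it is a minimal stand-in in which the “model” is simply a bell curve fitted to its training data. The function name `simulate_collapse` and all parameter values are assumptions chosen for illustration. Each generation fits a mean and spread to the previous generation's output and then produces a fresh dataset from that fit alone.

```python
import random
import statistics


def simulate_collapse(generations=300, sample_size=20, seed=0):
    """Refit a Gaussian to its own samples, generation after generation.

    Returns the fitted standard deviation of each generation.
    """
    rng = random.Random(seed)
    # Generation 0 stands in for human-made data: draws from a standard normal.
    data = [rng.gauss(0.0, 1.0) for _ in range(sample_size)]
    spreads = []
    for _ in range(generations):
        # "Train" the model: estimate mean and spread from the current data.
        mu = statistics.fmean(data)
        sigma = statistics.pstdev(data)
        spreads.append(sigma)
        # The next generation sees only what this model generates.
        data = [rng.gauss(mu, sigma) for _ in range(sample_size)]
    return spreads


if __name__ == "__main__":
    spreads = simulate_collapse()
    for generation in (0, 50, 100, 200, 299):
        print(f"generation {generation:3d}: spread = {spreads[generation]:.6f}")
```

Because each fit is made from a finite sample, rare values in the tails are underrepresented and the estimated spread tends to shrink a little each round. Over many generations the data contracts toward a single point, a small-scale version of the lost diversity described above.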

Tech Giants Revise Their Strategies: Perspectives from Ilya Sutskever and Yann LeCun

This sense of crisis is clearly reflected in the statements of leading AI experts. Ilya Sutskever, co-founder and former chief scientist of OpenAI, recently remarked in a keynote speech, “We have almost mined out the gold of the internet, and simply scaling up will no longer be enough to reach the next level of intelligence.” This signals a shift in the AI race from competing on the number of GPUs to competing on exclusive data that others do not possess.

Yann LeCun, Chief AI Scientist at Meta, has also pointed out the fundamental limitations of text-based learning. In his books and academic lectures, he emphasizes, “Human children do not acquire intelligence by reading trillions of words, but by interacting with the physical world in real time.” He criticizes the current text-dependent training paradigm as one that ultimately leads to a “hallucination loop” disconnected from reality. LeCun argues for an architectural shift toward “world models” that can understand physical laws by learning from video and sensory data, beyond just text.

'Human Records' and Questions Regain Importance

The stagnation of AI learning does not signal a technological disaster, but rather a change in the “growth paradigm.” If the previous era was one in which AI bulked up by absorbing massive amounts of human records, the future will be defined by the “quality” of data over its “quantity,” and by the creative records generated by humans, which are now a precious asset for AI’s survival.

Meticulously observed laboratory data, vivid field notes, and the complex moral and philosophical judgments that only humans can make are areas AI cannot synthesize on its own. For this reason, tech giants like Google and Microsoft are now investing astronomical sums not just in collecting data, but in hiring expert groups to create high-quality question sets to teach AI directly.

The next stage of AI will not be confined to machines. The answer still lies in the physical world where humans live, and in the primary data generated there. This moment, when it seems AI has nothing left to learn, is not a technological limitation, but a time for reflection on what humans should value, record, and leave behind. We are entering an era where the question is not what AI can do, but what kind of world we will choose to preserve as data.
