"Simultaneous Reasoning with Text and Images"... LG Unveils Multimodal AI 'Exaone 4.5'

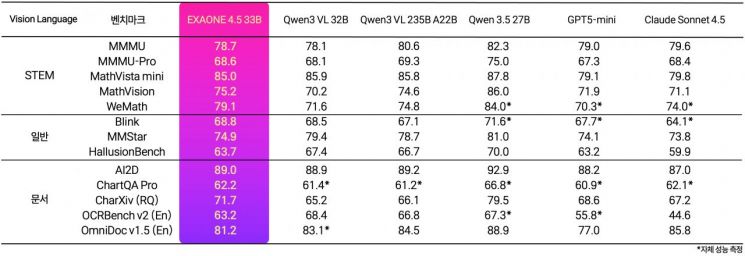

Outperforms OpenAI's GPT-5 mini in Average Score Across 13 Visual Ability Benchmarks

Surpasses Google's Gemma4 in Coding Benchmarks

On April 9, LG AI Research unveiled "Exaone 4.5," a multimodal AI model capable of simultaneously understanding and reasoning with both text and images.

"Exaone 4.5" is a vision-language model (VLM) that integrates a vision encoder and a large language model (LLM) into a unified architecture. It is built upon the technological expertise accumulated by LG AI Research since the development of "Exaone 1.0," South Korea's first multimodal artificial intelligence (AI) model, in December 2021.

LG AI Research plans to begin expanding Exaone into additional modalities in earnest once the third phase of the project is confirmed, after the second phase concludes this August. The ultimate goal is to take Exaone beyond virtual environments and develop it into physical intelligence that can understand and make judgments about the real world.

"Exaone 4.5" excels at accurately reading and reasoning over complex documents common in industrial settings, such as contracts, technical drawings, financial statements, and scanned documents. It scored an average of 77.3 points across five STEM (science, technology, engineering, and mathematics) benchmarks, ahead of OpenAI's GPT-5 mini (73.5 points) and Anthropic's Claude Sonnet 4.5 (74.6 points) from the United States, as well as Alibaba's Qwen3 235B (77.0 points) from China.

Notably, on LiveCodeBench v6, a representative coding benchmark, Exaone 4.5 scored 81.4 points, outperforming Google's latest model, Gemma4 (80.0 points). On ChartQA Pro, which evaluates the ability to analyze and reason over complex charts, it scored 62.2 points, showing that it is competitive with peer models worldwide.

An LG AI Research representative explained, "Achieving high average scores on visual ability assessment metrics indicates that the AI possesses not only the capacity to recognize characters or unstructured data within documents, but also the comprehension needed to understand context and answer questions."

"Exaone 4.5" also delivered notable results in efficiency. With 33 billion (33B) parameters, it is about one-seventh the size of "K-Exaone," released at the end of last year, yet it achieves comparable performance in text understanding and reasoning. This was made possible by LG AI Research's proprietary hybrid attention architecture and high-speed inference technology based on multi-token prediction.

Jinshik Lee, Head of Exaone Lab at LG AI Research, stated, "Exaone 4.5 demonstrates that LG AI has entered the multimodal era, advancing beyond text to understanding visual information. Starting with this model, we will extend AI's understanding to speech, video, and physical environments, ultimately building AI that can make practical decisions and take action in industrial settings."

On the same day, LG AI Research released "Exaone 4.5" on Hugging Face, a global open-source platform, for research, academic, and educational purposes.

© The Asia Business Daily(www.asiae.co.kr). All rights reserved.

![Is This a Boom or a Recession? Queues for Both Luxury and Bargain Goods [K-Shaped Consumption Era]](https://cwcontent.asiae.co.kr/asiaresize/307/2026042114133834494_1776748418.png)