Will Memory Shortages Worsen? OpenAI Unveils Patent for Chip With 20 HBMs

Chiplet Design More Than Doubles Chip-to-Memory Distance

Aims to Maximize Capacity by Connecting 20 HBMs

As competition intensifies among artificial intelligence (AI) companies, there is a growing shortage of memory, a core component for AI systems. In this context, OpenAI, renowned for ChatGPT, has released a patent for a 'monster chip' that connects up to 20 High Bandwidth Memory (HBM) units, fueling expectations that memory shortages could become even more severe.

On April 9 (local time), OpenAI filed a semiconductor-related patent with the U.S. Patent and Trademark Office. The patent, titled "Compute Chiplet with Non-Adjacent HBM Chiplets, I/O Chiplets, and Embedded Logic Bridge," concerns 'chiplet' technology, a back-end (packaging) approach that assembles different types of chips into a single integrated unit.

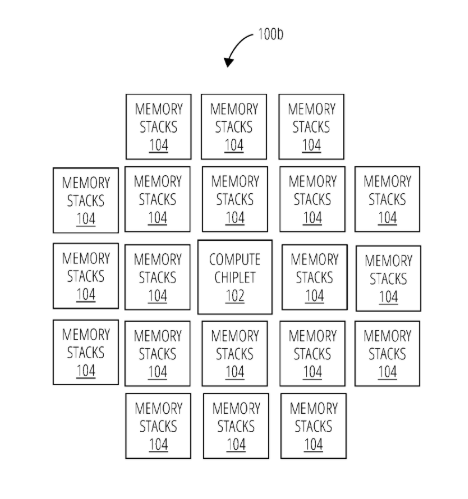

An artificial intelligence (AI) chip designed by OpenAI. The central core chip is surrounded by high-bandwidth memory (HBM). Source: OpenAI

Chiplets themselves are not a new technology. Leading semiconductor companies such as Nvidia, Apple, Intel, and AMD already use chiplets to assemble multiple chips like Lego blocks and maximize performance. What sets OpenAI's patent apart is that it describes a chiplet design capable of connecting up to 20 HBMs.

Currently, Nvidia's graphics processing units (GPUs) connect 4 to 8 HBMs per chip as off-chip memory. If it becomes possible to attach 20 HBMs to a single chip, the result would be a super AI chip with at least twice the memory capacity of Nvidia's GPUs.
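As a rough check on the "at least twice" figure, the short sketch below compares HBM counts only. It assumes every HBM has the same capacity, which is an assumption made for illustration; the article gives no per-HBM capacity figures. The function name `capacity_ratio` is hypothetical.

```python
def capacity_ratio(new_hbm_count: int, baseline_hbm_count: int) -> float:
    """Return how many times more memory the new design holds.

    Assumes each HBM has identical capacity (illustrative assumption),
    so the capacity ratio reduces to the ratio of HBM counts.
    """
    if new_hbm_count <= 0 or baseline_hbm_count <= 0:
        raise ValueError("HBM counts must be positive")
    return new_hbm_count / baseline_hbm_count


# Counts taken from the article: 20 HBMs versus 4 to 8 HBMs per GPU.
ratio_vs_8 = capacity_ratio(20, 8)  # 2.5
ratio_vs_4 = capacity_ratio(20, 4)  # 5.0
print(f"20 HBMs vs 8: {ratio_vs_8}x, vs 4: {ratio_vs_4}x")
```

Under that assumption, 20 HBMs would hold 2.5 times the memory of an 8-HBM GPU and 5 times that of a 4-HBM GPU, which is consistent with "at least twice."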

Semiconductor companies have maintained a strategy of expanding memory by connecting HBMs around AI chips. However, there are physical limitations to such designs. The international standard set by JEDEC restricts the connection distance between chips and memory to 6mm to prevent signal attenuation (the phenomenon where communication signals become unstable as the distance increases).

OpenAI's chiplet patent incorporates an active circuit called an "embedded logic bridge," which greatly extends this connection distance from 6mm to 16mm. As a result, it becomes possible to "connect" HBMs even if they are not in direct contact with the AI chip. According to the design described by OpenAI, dozens of HBMs radiate outward from the central core chip, forming a cluster-like structure.

However, for this chip to be realized, there are numerous technical challenges to overcome beyond just the connection constraints. For example, the extreme heat generated when dozens of chips are connected simultaneously must be addressed.

Meanwhile, since October last year, OpenAI has been working with U.S. semiconductor design firm Broadcom to develop custom AI chips. Reports indicate that OpenAI plans to equip its chips with Samsung Electronics' HBM4.

© The Asia Business Daily(www.asiae.co.kr). All rights reserved.