Robots Learn "Fingertip Sensation"... GIST Develops AI Technology for Precision Task Robots [Reading Science]

Core Technology for Humanoid and Manufacturing Robots

83% Success Rate in Gear Assembly and Cable Connection

An artificial intelligence (AI) technology has been developed that lets robots learn the "sense of fingertip force" that humans feel when touching objects, allowing the robots to perform precision tasks. Experts say it could become a core foundation for next-generation robotics, in which humanoid or manufacturing robots take over tasks now done by human hands.

On March 10, a research team led by Professor Gyubin Lee of the School of AI Convergence at Gwangju Institute of Science and Technology (GIST) announced that it has developed an AI technology that enables robots to learn the force and tactile sensations humans perceive when touching objects, allowing for precise manipulation tasks.

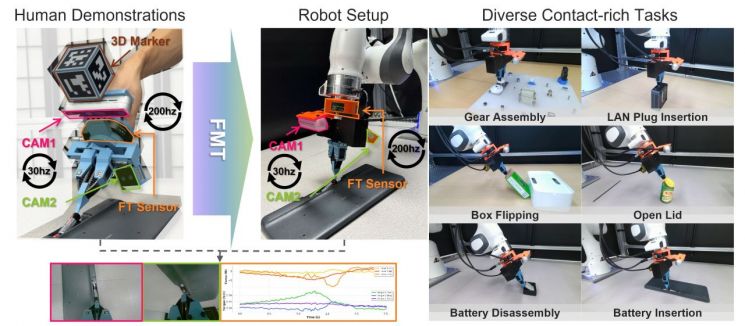

System Configuration Diagram of the ManipForce Hand Force Measurement Device. This device simultaneously records hand movements, forces, and task videos while a person manipulates objects directly. The system consists of two RGB cameras, a force-torque sensor (F/T) worn on the wrist, a 3D marker module for tracking hand position, and a robotic hand device for grasping and manipulating objects. Provided by the research team

Conventional robot learning methods have mostly relied on "imitation learning," which uses only camera (RGB) information. As a result, they have struggled to perceive subtle resistance or momentary force changes that occur during processes such as fitting or assembling parts.

To address these challenges, the research team developed a technology that incorporates the "sense of force" humans naturally experience during work into robot learning.

The "ManipForce" hand-force measurement device developed by the team records a person performing manual tasks and simultaneously collects: 1) task videos captured by two cameras; 2) force and torque data measured by a wrist sensor; and 3) information on hand movements and position.

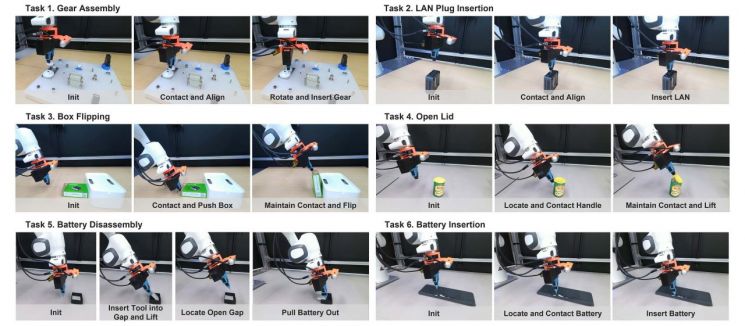

Comparison of Actual Task Success Rates. Robots equipped with the FMT achieved an average success rate of 83% across six contact tasks including gear assembly and battery insertion. This is significantly higher than the conventional method using only visual information, which had about a 20% success rate. Provided by the research team

The system is designed to record video at 30 frames per second and force information at over 200 readings per second, enabling it to precisely capture not only visible scenes but also subtle forces that occur at the moment of contact. In addition, it applies 3D marker-based position tracking and a gravity compensation feature that eliminates the influence of the device's own weight, ensuring accurate measurement of forces that occur during actual contact.
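The gravity compensation step can be illustrated with a minimal sketch. The article does not describe the team's actual procedure, so everything here is an assumption for illustration: the function name `compensate_gravity`, a known device mass and center of mass, a rotation matrix supplied by the marker-based tracking, and a sensor that reads the device's weight as a force when nothing is being touched.

```python
import numpy as np

# Assumption: world frame has z pointing up, so gravity acts along -z.
GRAVITY_WORLD = np.array([0.0, 0.0, -9.81])  # m/s^2


def compensate_gravity(force_sensor, torque_sensor, R_world_from_sensor,
                       mass_kg, com_sensor):
    """Subtract the device's own weight from a wrist F/T reading.

    force_sensor, torque_sensor: raw reading, expressed in the sensor frame.
    R_world_from_sensor: 3x3 rotation from sensor frame to world frame
        (hypothetically obtained from the 3D marker tracking).
    mass_kg, com_sensor: mass and center of mass of the hardware mounted
        past the sensor (hypothetical calibration values).
    """
    # Express the weight vector in the sensor frame.
    weight_sensor = R_world_from_sensor.T @ (mass_kg * GRAVITY_WORLD)
    # What remains after removing the weight is attributed to contact.
    contact_force = force_sensor - weight_sensor
    contact_torque = torque_sensor - np.cross(com_sensor, weight_sensor)
    return contact_force, contact_torque


# Usage: a device held still in free space should report zero contact force.
R = np.eye(3)
mass = 0.5                           # kg, illustrative value
com = np.array([0.0, 0.0, 0.05])     # m, illustrative value
raw_force = R.T @ (mass * GRAVITY_WORLD)
raw_torque = np.cross(com, raw_force)
free_force, free_torque = compensate_gravity(raw_force, raw_torque, R, mass, com)

# Adding a 1 N push along x should leave exactly that push after compensation.
push = np.array([1.0, 0.0, 0.0])
pushed_force, _ = compensate_gravity(raw_force + push, raw_torque, R, mass, com)
```

Because the weight vector is rotated into the sensor frame on every reading, the correction follows the hand as it tilts, which is why orientation tracking and gravity compensation are naturally paired.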

The research team also developed the "Frequency-Aware Multimodal Transformer (FMT)," an AI model that learns from both video and force data collected in this manner.

Because video is sampled 30 times per second and force data more than 200 times per second, the two data streams do not line up one-to-one in time. The FMT model is designed to analyze each data stream separately, then compare and integrate them for learning.
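To make the rate mismatch concrete, the sketch below groups force readings by the video frame they belong to. It is not the FMT architecture, which the article does not detail; it only shows that at roughly 200 readings per second, each 30 fps frame corresponds to about six or seven force samples. The function name `split_force_by_frame`, the exact 200 Hz rate, and the random stand-in data are assumptions for illustration.

```python
import numpy as np


def split_force_by_frame(frame_times, force_times, force_samples):
    """Assign each force reading to the first video frame at or after it.

    Returns one array per frame holding the force readings that arrived
    after the previous frame and up to (and including) this frame's time.
    """
    # Index one past the last force sample at or before each frame time.
    stops = np.searchsorted(force_times, frame_times, side="right")
    starts = np.concatenate(([0], stops[:-1]))
    return [force_samples[a:b] for a, b in zip(starts, stops)]


video_hz, force_hz = 30, 200                 # force rate assumed to be exactly 200 Hz
frame_times = np.arange(video_hz) / video_hz     # one second of video
force_times = np.arange(force_hz) / force_hz     # one second of force data
# Stand-in data: 6 channels per reading (3 force + 3 torque components).
force_samples = np.random.default_rng(0).normal(size=(force_hz, 6))

windows = split_force_by_frame(frame_times, force_times, force_samples)
window_sizes = [len(w) for w in windows]
```

A model that looked at only one force reading per frame would discard most of the contact signal, which is one plausible reason for processing the two streams separately before combining them.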

Through this method, robots can simultaneously understand the position of objects and the state of contact, enabling them to perform stable actions even in precision manipulation tasks that involve frequent contact.

Research team photo. From the left: Dr. Sungjoo Lee, Professor Gyubin Lee, PhD candidates Gunhyup Lee and Sangjun Noh, integrated MS/PhD student Kangmin Kim, senior researcher Seunghyuk Baek (Korea Institute of Machinery and Materials), and master's student Youngjin Lee. Provided by GIST

The research team validated their approach with real robot experiments involving six tasks: gear assembly, box flipping, battery insertion, internet cable plug connection, lid opening, and battery removal. Each task was performed 20 times, resulting in an average success rate of about 83%. This is a significant improvement over the roughly 20% achieved by the conventional method, which used only RGB camera video.

Professor Gyubin Lee of the School of AI Convergence at GIST stated, "This research goes beyond the limitations of conventional robot learning, which relied solely on camera video, by presenting a new AI learning framework that utilizes force-sensing data. In the future, this can greatly expand the use of robots for various tasks requiring fine force control, not only in manufacturing settings for parts assembly or connector engagement, but also for household activities such as battery replacement."

This research was supported by the Robot Industry Technology Development Project of the Ministry of Trade, Industry and Energy and the National Research Foundation of Korea. The results were posted as a preprint on arXiv, and the paper is scheduled to be presented at the IEEE International Conference on Robotics and Automation (ICRA 2026), a leading conference in the field of robotics.

© The Asia Business Daily(www.asiae.co.kr). All rights reserved.