Kakao Brain Unveils 'RQ-Transformer'... AI Image Generation Speed Doubled

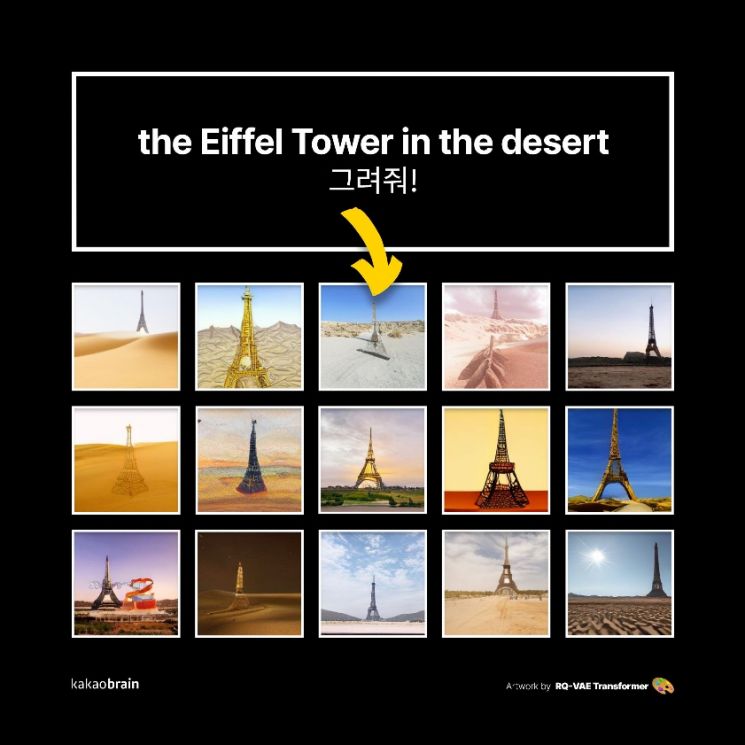

[Asia Economy Reporter Kang Nahum] Kakao Brain announced on the 19th that it has released ‘RQ-Transformer’, an upgraded version of its ultra-large artificial intelligence (AI) image generation model ‘minDALL-E’, on GitHub, the world's largest open-source community.

Composed of 3.9 billion parameters, RQ-Transformer is a 'text-to-image' AI model trained on 30 million pairs of text and images. It reduces computational costs and increases image generation speed while significantly improving image quality.

Compared with minDALL-E, RQ-Transformer is three times larger, generates images twice as fast, and was trained on a dataset twice as large. Notably, whereas minDALL-E was essentially a reproduction of ‘DALL-E’, released by the American AI company OpenAI, RQ-Transformer was developed with Kakao Brain’s own proprietary technology.

Whereas existing models represent a high-resolution image as a two-dimensional codemap, RQ-Transformer sequentially predicts and generates images represented as three-dimensional codemaps. Because this representation loses less information when the image is compressed, high-quality images can be expressed with lower-resolution codemaps. This lowers computational cost and speeds up image generation compared with conventional image generation models.
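The "three-dimensional codemap" comes from residual quantization: instead of replacing each position of a downsampled image grid with a single code, the model quantizes the vector, subtracts the chosen codeword, and quantizes what is left, repeating this D times. An H x W grid therefore becomes an H x W x D stack of codes, and adding up the selected codewords recovers the vector more closely with each extra level. The toy sketch below illustrates only that idea, assuming a tiny hand-picked 2-D codebook and hypothetical function names (`residual_quantize`, `build_code_map`); it is not Kakao Brain's code, and the real model learns its codebook and operates on neural-network features.

```python
from typing import List, Sequence, Tuple

Vector = Tuple[float, ...]


def nearest_code(vec: Sequence[float], codebook: Sequence[Vector]) -> int:
    """Return the index of the codeword closest to vec (squared Euclidean distance)."""
    return min(
        range(len(codebook)),
        key=lambda k: sum((v - c) ** 2 for v, c in zip(vec, codebook[k])),
    )


def residual_quantize(vec: Sequence[float], codebook: Sequence[Vector], depth: int) -> List[int]:
    """Encode one vector as `depth` codes drawn from a shared (hypothetical) codebook."""
    residual = list(vec)
    codes: List[int] = []
    for _ in range(depth):
        k = nearest_code(residual, codebook)
        codes.append(k)
        # Subtract the chosen codeword; the next level quantizes the leftover error.
        residual = [r - c for r, c in zip(residual, codebook[k])]
    return codes


def reconstruct(codes: Sequence[int], codebook: Sequence[Vector]) -> List[float]:
    """Approximate the original vector as the sum of the selected codewords."""
    out = [0.0] * len(codebook[0])
    for k in codes:
        out = [o + c for o, c in zip(out, codebook[k])]
    return out


def build_code_map(feature_map: Sequence[Sequence[Vector]],
                   codebook: Sequence[Vector], depth: int) -> List[List[List[int]]]:
    """Turn an H x W grid of vectors into an H x W x depth stack of codes."""
    return [[residual_quantize(v, codebook, depth) for v in row] for row in feature_map]


if __name__ == "__main__":
    # Toy codebook and a 2 x 2 "feature map" of 2-D vectors (illustrative values only).
    codebook = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (0.5, 0.5), (0.25, 0.0), (0.0, 0.25)]
    feature_map = [[(1.25, 0.0), (0.5, 0.75)], [(0.0, 0.0), (1.0, 1.0)]]

    code_map = build_code_map(feature_map, codebook, depth=2)
    print(code_map)  # [[[1, 4], [3, 5]], [[0, 0], [3, 3]]]

    # One level of codes is a coarse approximation; a second level refines it.
    print(reconstruct(residual_quantize((1.25, 0.0), codebook, 1), codebook))  # [1.0, 0.0]
    print(reconstruct(residual_quantize((1.25, 0.0), codebook, 2), codebook))  # [1.25, 0.0]
```

Stacking codes in depth keeps the spatial grid small while adding precision at each position, which is the trade-off the article describes: a low-resolution codemap that still represents the image with little loss.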

In addition, because it was trained on a large-scale dataset, it can understand combinations of text it has never seen before and generate corresponding images.

Kim Il-doo, CEO of Kakao Brain, said, “Computers that create images according to human commands demonstrate a technology that grasps and understands the intent behind those commands. The groundbreaking text-to-image AI model we have released this time will be the first step toward a future where humans and computers communicate freely.”

Meanwhile, Kakao Brain plans to present a paper on RQ-Transformer at CVPR 2022, the world-renowned computer vision conference scheduled for June.

© The Asia Business Daily(www.asiae.co.kr). All rights reserved.