"Samsung Has Many World Bests"... Jensen Huang Announces Collaboration with Samsung on Inference Semiconductor 'Groq 3' [GTC 2026]

"Groq 3 chip in production, shipping in Q3"

Samsung: "Preparing for Feynman and other roadmap milestones"

New 'robotaxi' lineup including Hyundai

Rubin Ultra, optics... system expansion strategy

Jensen Huang, CEO of NVIDIA, unveiled the next-generation inference-specialized artificial intelligence (AI) accelerator 'Groq 3', developed in collaboration with Samsung Electronics, and announced that the chip will be produced at Samsung Foundry (semiconductor contract manufacturing) and will begin shipping in the third quarter of this year.

During his keynote address at 'GTC 2026', held at the SAP Center in San Jose, California, USA, on March 16, Huang stated, "Samsung Electronics is manufacturing the Groq 3 Language Processing Unit (LPU) chip for us. We are truly grateful to Samsung." He added, "The chip has already entered the production phase, and we are working to ramp up volumes as quickly as possible. We expect market shipments to begin in the second half of this year, around the third quarter."

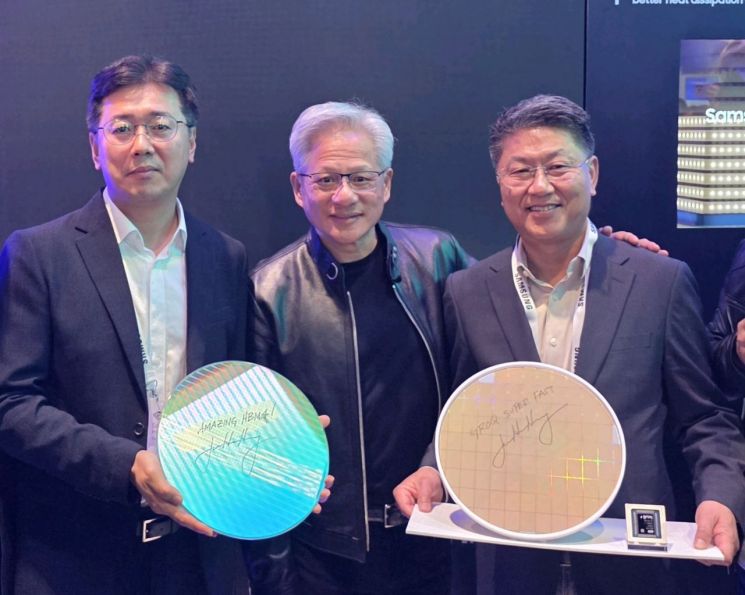

Jensen Huang, CEO of NVIDIA, poses for a commemorative photo with Sangjun Hwang, Vice President in charge of Memory Development at Samsung Electronics (left), and Jinman Han, Head of the Foundry Business Division (right), at the Samsung Electronics exhibition booth at 'GTC 2026' held in San Jose, California, USA, on the 16th (local time). Samsung Electronics.

The Groq 3 LPU is a semiconductor specialized for large language model (LLM) inference. Huang's comments publicly confirmed that Samsung Foundry is participating in the production of NVIDIA's next-generation AI chips, indicating ongoing collaboration between the two companies in the AI semiconductor supply chain. Notably, after becoming the first in the world to mass-produce and ship sixth-generation High Bandwidth Memory (HBM4) last month, Samsung Electronics has now also taken on foundry manufacturing, establishing a 'comprehensive AI partnership' that spans both memory and foundry operations.

After his keynote, Huang visited the Samsung Electronics booth in person and signed collaborative products such as Groq 3 and HBM4, further strengthening the partnership. He examined Samsung's HBM4 and HBM4E wafers and finished products, as well as a 4nm (1nm = one billionth of a meter) foundry wafer of the Groq AI LPU chip, remarking, "Samsung has many world bests." He then took a commemorative photo with Jinman Han, President and Head of the Foundry Business Division at Samsung Electronics, and Sangjun Hwang, Vice President in charge of Memory Development.

Samsung: "Preparing According to Roadmap, Will Supply Diligently"

Jensen Huang, CEO of NVIDIA, signs a 4-nanometer foundry wafer of the Groq AI LPU chip at the Samsung Electronics exhibition booth during 'GTC 2026', held on the 16th (local time) in San Jose, California, USA. Samsung Electronics.

According to Samsung Electronics, the Groq 3 chip is currently in the sampling phase and is being produced at the Pyeongtaek plant. Initial orders have already been secured, and a significant increase in demand is expected from next year. Vice President Hwang told reporters, "Because the Groq 3 chip is large, demand for wafers will increase significantly. If NVIDIA aggressively expands demand, we will work hard to supply accordingly." The company also explained that because the process used for Groq 3 is also applied to the HBM4 base die, growing AI demand will naturally lead to increased demand for advanced processes such as 4nm.

From a technology development perspective, collaboration between the foundry and design teams played a key role. As Groq is a startup with limited design personnel, Samsung Electronics reportedly participated in architecture implementation through its design support team. This is regarded as a 'turnkey' collaboration model, covering not only manufacturing but also participation from the design stage.

Attention is also turning to the possibility of continued collaboration with NVIDIA. Samsung Electronics plans to expand its overall HBM supply to about three times last year's level, with a strategy focused on increasing the proportion of high-performance products such as HBM3E and HBM4. Vice President Hwang stated, "With HBM4, the key challenge is not just capacity competition but simultaneously achieving performance and power efficiency," adding, "We are continuing to develop next-generation products based on advanced processes." Referring to the next-generation AI accelerator 'Feynman' mentioned by Huang during his keynote, he said, "We are preparing according to our customers' schedules."

"Data Centers in Space"... Hyundai Motor's 'Robotaxi' Also Mentioned

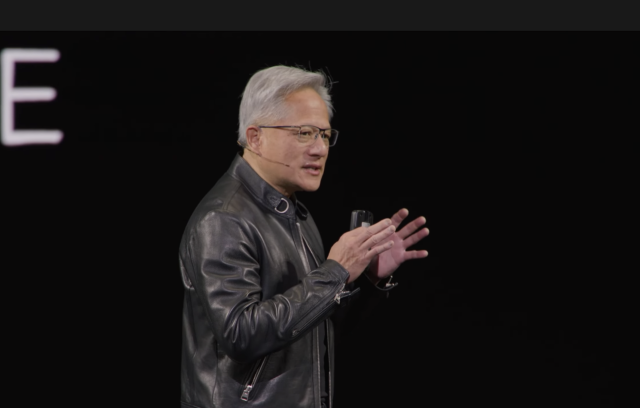

Jensen Huang, CEO of NVIDIA, is delivering the keynote speech at 'GTC 2026' held in San Jose, California, USA on the 16th (local time). NVIDIA.

NVIDIA also presented a vision to physically expand AI infrastructure into space. CEO Huang announced plans to build space-based data centers, leveraging experience in satellite-based computing. The company is developing a new system called 'Vera Rubin Space One', aiming to establish infrastructure for direct AI computation in space environments. Huang explained, "In space, there is no conduction or convection, only radiation, so the cooling method is completely different. New engineering approaches are needed to solve this."

The company is also ramping up its 'physical AI' expansion strategy focused on autonomous driving and robotics. At the event, NVIDIA named new partners including major automakers such as Hyundai Motor, BYD, and Nissan. The company aims to expand the robotaxi ecosystem with these partners and accelerate the introduction of real-world services through its collaboration with Uber. With the annual production capacity of these companies totaling about 18 million vehicles, the size of the robotaxi fleet is expected to grow rapidly. At the same time, NVIDIA is collaborating with global robotics companies to implement AI-based robots on manufacturing floors and is working to establish the 'Aerial AI' platform, which encompasses telecommunications infrastructure. Huang emphasized, "The 'ChatGPT moment' of autonomous driving has arrived. Physical AI will be a turning point as it spreads across industries."

Expansion of Ultra-large Systems Incorporating Optical Technology

Jensen Huang, CEO of NVIDIA, is delivering the keynote speech at GTC 2026 held on the 16th (local time) in San Jose, California, USA. NVIDIA.

Huang also introduced 'Rubin Ultra', the next generation of the AI accelerator 'Vera Rubin' scheduled for mass production this year. Rubin Ultra is expected to further enhance performance with its new chip architecture, and NVIDIA plans to dramatically increase computing density in AI data centers through this advancement.

He unveiled a next-generation AI data center architecture roadmap, including Vera Rubin and Rubin Ultra, and presented a strategy to expand ultra-large-scale systems integrating GPUs, CPUs, and networks. Instead of simply boosting the performance of individual devices, the approach is to combine the entire system to maximize processing power. The key is a 'hybrid expansion architecture' that utilizes both copper-based connections and optical technology.

In particular, NVIDIA announced plans to expand its NVLink-based system from the existing NVL72 to a scale of up to 576 GPUs. As the number of connected GPUs increases, both computing speed and processing capacity rise simultaneously. To achieve this, the company is implementing a structure that combines copper interconnects with optical links. On the CPU side, the next-generation 'Grace' family processors, 'LP35' and the subsequent 'LP40', will be applied sequentially. The strategy is to further advance both the GPUs responsible for computation and the supporting CPUs, thereby enhancing the overall system performance.

NVIDIA has already applied these technologies in production, and as AI infrastructure expands, the company plans to build an integrated ecosystem encompassing copper, optics, and Co-Packaged Optics (CPO). Huang stressed, "In the AI era, not only computing power but also connection capacity is a key competitive factor. Capacity expansion is necessary at the ecosystem level."

© The Asia Business Daily(www.asiae.co.kr). All rights reserved.