'HBM Bottleneck' Deepens Amid AI Boom... Production Hampered by Advanced Process and Density Limits

Requires 2.5 Times More Investment Than Conventional DRAM

HBM4 Output Lower Than HBM3

AI Demand Surge Makes Closing Supply Gap Difficult

With the proliferation of artificial intelligence (AI), the supply of high bandwidth memory (HBM) is failing to keep up with demand, intensifying bottlenecks within the semiconductor industry. As the focus of AI semiconductor competition shifts from graphics processing units (GPUs) to memory bandwidth, the production limitations of HBM have emerged as a constraint across the entire industry.

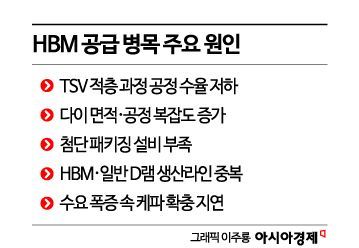

According to industry sources on November 10, the disruption in HBM supply stems from a combination of factors: a sharp increase in demand, high defect rates in the TSV (through-silicon via) stacking process, prolonged verification due to heat generation and signal interference, delays in securing advanced packaging equipment, and the need to produce HBM in parallel with conventional DRAM.

Market uncertainty is also playing a role, leading some semiconductor companies to refrain from making additional equipment investments and instead focus on improving yield rates (the ratio of good products) within existing facilities. Across the industry, there is a prevailing trend of prioritizing process stabilization over short-term capacity expansion.

An industry insider stated, "The current pace of increase in HBM demand is faster than the typical rate of supply growth. Because the die size of HBM is roughly 2.5 times larger than that of other DRAM, increasing production speed to match that of conventional DRAM would require 2.5 times more production capacity investment."

Visitors to the "2025 Semiconductor Expo" at COEX in Gangnam-gu, Seoul, view SK Hynix's HBM4 exhibit on the 22nd of last month. Photo by Dongju Yoon

This means that even when using the same production facilities, the output of HBM is significantly lower than that of conventional DRAM. Since each chip occupies a larger area, the number of chips that can be produced from a wafer of the same size decreases. Therefore, to increase production speed, factories, equipment, and material inputs must be significantly expanded, which is not easy in practice, resulting in delays in supply expansion.
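The wafer arithmetic behind the insider's 2.5x figure can be sketched roughly as follows. This is an illustration under stated assumptions, not data from the article: the 300 mm wafer diameter and the 60 mm² conventional die area are hypothetical placeholders, and the dies-per-wafer formula is a common first-order estimate that ignores scribe lines and defects. Only the 2.5x die-size ratio comes from the quote above.

```python
import math


def gross_dies_per_wafer(wafer_diameter_mm: float, die_area_mm2: float) -> int:
    """First-order estimate: wafer area / die area, minus an edge-loss term."""
    radius = wafer_diameter_mm / 2
    area_term = math.pi * radius ** 2 / die_area_mm2
    edge_term = math.pi * wafer_diameter_mm / math.sqrt(2 * die_area_mm2)
    return int(area_term - edge_term)


# Hypothetical inputs, chosen only for illustration.
WAFER_DIAMETER_MM = 300.0
CONVENTIONAL_DIE_MM2 = 60.0
# The 2.5x ratio is the figure quoted by the industry insider.
HBM_DIE_MM2 = CONVENTIONAL_DIE_MM2 * 2.5

conventional = gross_dies_per_wafer(WAFER_DIAMETER_MM, CONVENTIONAL_DIE_MM2)
hbm = gross_dies_per_wafer(WAFER_DIAMETER_MM, HBM_DIE_MM2)

# Wafers of HBM needed to match the die count of one conventional DRAM wafer.
wafer_ratio = conventional / hbm

print(f"conventional dies per wafer: {conventional}")
print(f"HBM dies per wafer:          {hbm}")
print(f"wafers needed for parity:    {wafer_ratio:.2f}x")
```

Under these assumptions the ratio comes out slightly above 2.5, because larger dies lose proportionally more area at the wafer edge. That is before any yield loss is counted.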

HBM is manufactured using a TSV process, in which multiple DRAM dies are stacked and connected by vertical electrodes that pass through the silicon to carry electrical signals. During the stacking process, minute defects are likely to occur, leading to higher defect rates and longer times required to verify the quality of finished products. As a result, simply increasing the amount of equipment does not directly translate to a proportional increase in output.
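A simplified way to see why stacking amplifies defects is to treat each stacked layer as an independent step that must succeed. The sketch below assumes exactly that, which is a textbook simplification rather than a model cited in the article. The 99% per-layer yield and the 8- and 12-layer stack heights are hypothetical values chosen for illustration.

```python
def stack_yield(per_layer_yield: float, layers: int) -> float:
    """Yield of a whole stack if every layer must be good and layers fail independently."""
    return per_layer_yield ** layers


# Hypothetical per-layer yield and stack heights, for illustration only.
PER_LAYER_YIELD = 0.99

for layers in (8, 12):
    share_good = stack_yield(PER_LAYER_YIELD, layers)
    print(f"{layers}-layer stack: {share_good:.1%} of stacks good")
```

Even a small per-layer loss compounds as the stack grows, so a taller stack yields fewer good products from the same number of dies. This is one way to read the article's point that adding equipment does not raise output proportionally.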

The latest generation, HBM4, features an even more complex process. The number of input/output terminals has doubled compared to HBM3, making chip interconnections more complicated, and the gap between TSVs and bumps has narrowed, increasing the likelihood of minute defects. Manufacturers must secure extra space within the wafer to account for defective chips, further reducing the number of usable chips per wafer.

Ultimately, while the primary cause of the HBM bottleneck is the rapid surge in demand, it cannot be attributed to demand alone. According to industry analysis, the combination of low yield rates in the stacking process, lengthy verification procedures, and structural production constraints is preventing supply from keeping pace with demand.

The industry is strengthening verification processes to address issues such as heat generation and signal interference, but the added checks slow production further, creating a vicious cycle. In addition, the need to produce high-end HBM and conventional DRAM at the same time is cited as another constraint on expanding production.

Securing advanced packaging equipment is another major hurdle. While GPU demand can be partially absorbed through foundry expansion, HBM production involves a complex process combining TSV stacking and advanced packaging, making immediate capacity increases difficult. Unless restrictions on the supply of key materials and equipment are resolved, the production capacity of HBM will inevitably remain limited.

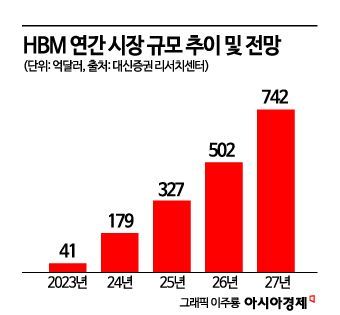

The industry forecasts that it will be difficult to close the supply gap in the short term. This is because the bottleneck in AI infrastructure is shifting from GPUs to memory. As the speed of AI model training and inference accelerates, the main performance constraint is moving from computing power to memory bandwidth and density. As a result, companies seeking to improve computational efficiency are placing the highest priority on securing high-performance HBM. Nvidia's next-generation GPU, Blackwell, will use HBM3E, while AMD has adopted HBM3 for its MI300 series. Cloud service providers such as Google and Amazon are also increasing their demand for AI chips equipped with HBM.

Major domestic companies are also accelerating their expansion efforts. Samsung Electronics has begun preparations for mass production of next-generation HBM4 at its Pyeongtaek campus in Gyeonggi Province, and SK Hynix has started similar preparations at its new M15X plant in Cheongju. However, most forecasts agree that closing the supply gap in the short term will be difficult. Even after building new lines for HBM, it typically takes six months to over a year to bring in equipment, stabilize processes, and verify yield rates. Furthermore, the limited availability of TSV and packaging equipment imposes physical constraints on the pace of expansion.


Lee Jonghwan, professor of system semiconductor engineering at Sangmyung University, stated, "In the case of Samsung Electronics, if the yield rate for HBM3E can be improved, it is likely that a stable supply of HBM4 will be possible next year. Since profitability is strong, the company is expected to make all-out efforts to increase yield rates."

© The Asia Business Daily(www.asiae.co.kr). All rights reserved.