[YouTube and Confirmation Bias]⑦ Should Platform Operators Be Left Unchecked?

Regulations exist abroad... Governments and judicial authorities can order content removal

Domestic experts also say, "Sanctions are necessary"

Must fake news and false or manipulated information circulating on social networking services (SNS) like YouTube be left unchecked? While part of the problem lies with individual political YouTubers who use SNS, there is growing criticism that the platform operators providing these distribution channels should also be held accountable.

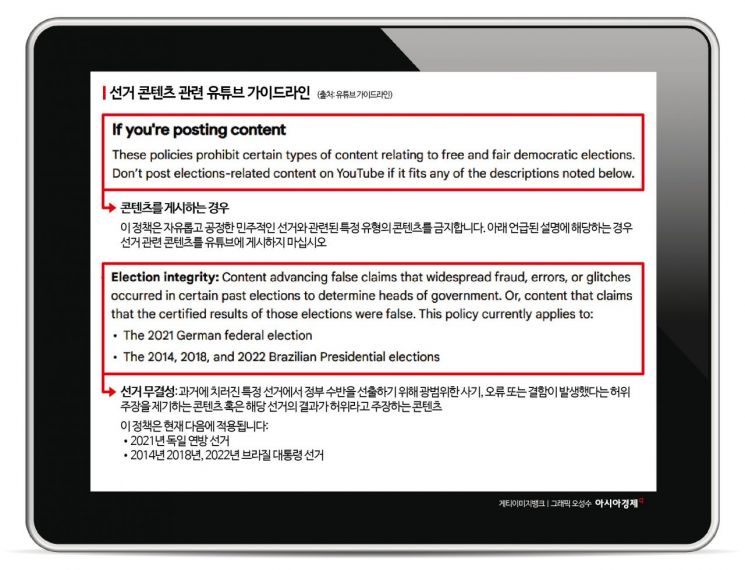

YouTube is the most frequently cited example. Although YouTube has established its own content moderation standards through community guidelines, it is difficult to say these are strictly enforced. YouTube’s own guidelines state that "channels or accounts may be terminated for repeatedly posting malicious, hateful, or personally attacking videos or comments." They also prohibit "links to content that encourages violent behavior by others" and "links to websites or apps that spread content causing serious harm, such as interfering with democratic processes."

There are even provisions for deleting inappropriate content or penalizing monetization. YouTube’s guidelines include a clause stating, "If a creator repeatedly encourages inappropriate behavior from viewers or exposes individuals to physical harm risks in local social or political contexts, content may be removed or other penalties imposed."

It is noteworthy that YouTube has already established an "election misinformation policy." This includes content related to election fraud. The guidelines state, "Do not upload content that promotes false claims of widespread fraud, errors, or glitches in specific past elections for government leaders, or that claims the results of such elections are false." The policy even specifies applicable election cases, such as the 2021 German federal election and the 2014, 2018, and 2022 Brazilian presidential elections, all of which faced allegations of election fraud. In Germany, there were claims that manipulated ballots affected the election. In Brazil, conspiracy theories spread mainly among supporters of former President Jair Bolsonaro; in 2023, those supporters rioted and occupied the Brazilian Congress, Supreme Court, and presidential office.

Nevertheless, content containing extreme political claims continues to circulate on YouTube, and personal attacks against individuals from opposing political camps persist. Some YouTubers, anticipating sanctions against the "Super Chat" donation feature, display their bank account numbers as subtitles in their videos to solicit direct donations. Creators are finding their own detours to circumvent the nominal guidelines.

On December 19, 2024, in front of the Seoul High Prosecutors' Office building, lawyer Seok Donghyun, who is scheduled to defend President Yoon Seokyeol, met with reporters and expressed his stance on the investigation of charges of insurrection and the impeachment trial. YouTubers were live streaming the scene on their phones, and real-time comments were appearing on the screen. Photo by Heo Younghan

Above all, it is problematic that individuals have difficulty responding when exposed to related content, whether in understanding how they were exposed or in knowing how to filter it. On YouTube and most SNS platforms, the algorithm is considered a corporate trade secret. The more viewing time and content there is, the greater the profit, so YouTube continues to maintain a policy of keeping its algorithm undisclosed.

However, academia argues that YouTube’s algorithm is already biased and self-moderation has become impossible. The UK-based anti-extremism think tank Institute for Strategic Dialogue (ISD) reported that "regardless of the age or interests of a YouTube account, the algorithm ultimately recommends false or manipulated information, as well as extreme or sensational content," and "when set to a 14-year-old’s profile, YouTube recommended violent and sexual content even to the youth account."

Abroad, Platform Operators Are Held Accountable... Content Removal Is Possible

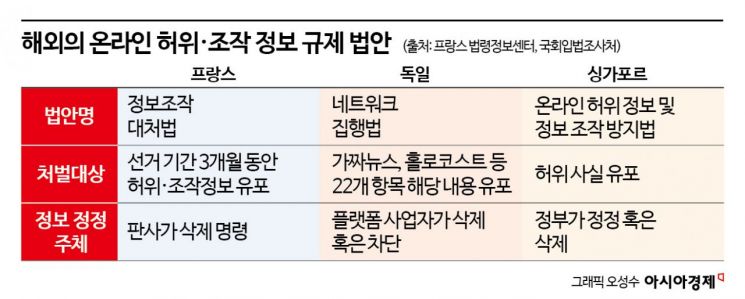

Internationally, there have been hearings to hold platform operators accountable and legislative moves to regulate platforms. France, after ongoing fake news controversies during the 2017 presidential election, enacted a law to counter information manipulation. The law stipulates that anyone who distributes false or manipulated information and fails to remove it can face up to one year in prison and a fine of 75,000 euros (about 100 million won). This applies from three months before the start of the election, and judges can order information to be deleted. The law also imposes an obligation on platform operators to provide transparent information to ensure fair elections.

In Germany, which is sensitive to hate speech, the "Network Enforcement Act" requires platform operators to delete posts containing fake news, Holocaust denial, or hate speech within 24 hours of discovery. Where illegality is harder to determine, content that is found to be illegal must be handled within a week.

Singapore has also implemented the Protection from Online Falsehoods and Manipulation Act since 2019. The government holds the authority to correct or delete information, and if global operators like Google or Facebook fail to comply, they can be fined up to 1 million Singapore dollars (about 1 billion won).

In the United States, controversy has recently arisen after Mark Zuckerberg, CEO of Meta, announced the abolition of the platform’s fact-checking function. In 2020, the US Senate Judiciary Committee summoned Zuckerberg and then-Twitter CEO Jack Dorsey to demand responses to the spread of false or manipulated information on their platforms during the election period. At the time, they promised to implement protective measures and strong actions. Zuckerberg introduced fact-checking and hate speech regulation policies on Facebook and Instagram. However, after Donald Trump was re-elected, Zuckerberg announced the abolition of the fact-checking function, leading to criticism that "he ultimately bowed to Trump’s demands."

Domestic Experts: "Platforms Also Profit... Responsibility Must Be Imposed"

Domestic experts showed some differences in approach, but all emphasized the need to hold platform operators like YouTube accountable.

Choi Sangbong, professor of journalism and broadcasting at Sungkonghoe University, said, "Freedom of expression should be respected, so expressing opinions can be allowed as much as possible. However, if a fact clearly exists and is provable, yet someone continues to deny it, sanctions for clear fake news can be imposed." Professor Choi added, "Platform operators like YouTube should also be held responsible. Even when fake news is distributed, they share profits with channel operators, so they have no incentive to impose sanctions. If the government steps in to regulate, there will be strong backlash. Platform operators should take the initiative and strengthen self-regulation."

Lim Myungho, professor of psychology at Dankook University, also emphasized, "Extreme information is being disseminated without filtering, so sanctions are necessary," and "platforms should actively work on filtering measures."

However, there is also the view that imposing sanctions is fundamentally impossible. Nam Jaeil, professor of journalism and broadcasting at Kyungpook National University, said, "Unless a violation of existing law occurs, it is virtually impossible to regulate videos uploaded to YouTube or similar content," adding, "Ultimately, it is important for citizens and the media to improve their capabilities and for a healthy public opinion ecosystem to be formed."
