Unable to Use Photoshop... How a Nordic Aesthetic Cosmetics Commercial Was Made in Just 30 Minutes

by Lee Eunseo

Published 28 Apr. 2026 07:43 (KST)

Updated 28 Apr. 2026 10:52 (KST)

Trying Out GPT Image 2.0

"Create a Commercial Video with Just a Few Words"

Vibe Coding Leads to the Rise of Vibe Image

"I'm going to create a video advertising a Nordic aesthetic cosmetics brand. Please generate a scene featuring a girl in a white dress adorned with floral decorations, reminiscent of the film 'Midsommar.'"

A fictional cosmetics advertisement video created by a reporter using OpenAI GPT Image 2.0 and ByteDance's XiDance 2.0. Photo by Eunseo Lee.

Describing an idea to artificial intelligence (AI) in natural language is now enough to create almost any video in a short amount of time. Without ever having used Photoshop or handled any video equipment beyond a smartphone camera, the reporter produced a cosmetics commercial in less than 30 minutes, relying only on their own design and directing skills. Over the course of an hour, the reporter created two videos in different genres: a commercial and an animation. This marks the advent of 'vibe design,' which allows even non-experts to create videos like a director.

The people appearing in the video were generated with OpenAI GPT Image 2.0. No complex technical jargon was necessary. By describing the character's appearance and clothing in detail via text or speech, or by attaching reference photos, it was easy to request revisions until the image matched what the reporter had imagined.

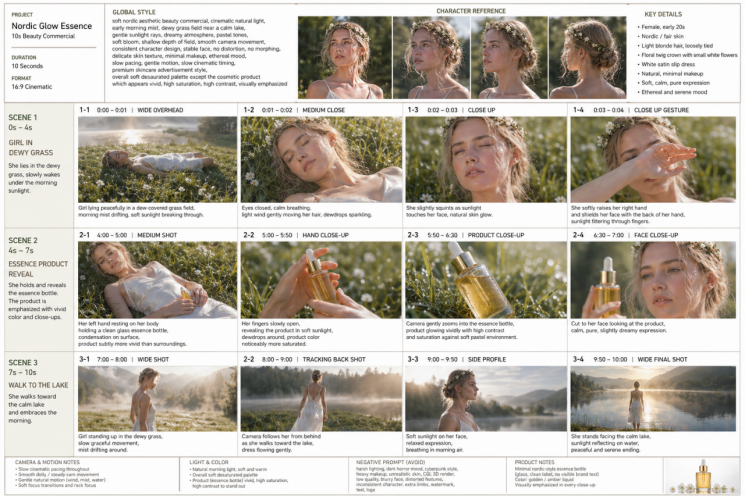

A reference guide for advertising videos created with GPT Image 2.0. Detailed cut-by-cut images can enhance the video quality. Photo by Eunseo Lee.

Once the character's image was finalized, the reporter instructed a large language model (LLM) to write the video prompts, including requests such as "ensure consistency from start to finish." To keep the video seamless, elements such as lighting, camera movement, and facial features had to carry over consistently from scene to scene. After introducing the plan for a 10-second video made up of three scenes, the reporter briefly described the content of each scene (0-4 seconds, 4-7 seconds, and 7-10 seconds). The follow-up request, "design continuous shot prompts and create a code block for each scene," produced prompts that divided each scene by the second and adjusted the character's movement, video speed, and color contrast for each segment.
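The scene-by-scene structure described above can be illustrated with a short script. This is only a sketch of the idea, not the reporter's actual prompts or any tool's API: the `Scene` class, the `build_prompts` helper, the wording of the consistency line, and the scene descriptions are all hypothetical placeholders. It checks that the scenes cover the 10-second timeline without gaps, then emits one labeled prompt block per scene.

```python
from dataclasses import dataclass


@dataclass
class Scene:
    start: int   # start time in seconds
    end: int     # end time in seconds
    action: str  # what happens in this scene (placeholder text)


# Hypothetical wording; repeated in every block so the same constraints
# are restated for each scene.
CONSISTENCY = (
    "Keep lighting, camera movement, and facial features "
    "consistent with the previous scene."
)


def check_contiguous(scenes, total):
    """Raise ValueError unless the scenes cover 0..total with no gaps or overlaps."""
    cursor = 0
    for s in scenes:
        if s.start != cursor or s.end <= s.start:
            raise ValueError(
                f"scene {s.start}-{s.end}s does not continue from {cursor}s"
            )
        cursor = s.end
    if cursor != total:
        raise ValueError(f"scenes end at {cursor}s, expected {total}s")


def build_prompts(scenes, total):
    """Return one prompt block per scene, labeled with its time range."""
    check_contiguous(scenes, total)
    return [
        f"[Scene {i} | {s.start}-{s.end}s of {total}s]\n{s.action}\n{CONSISTENCY}"
        for i, s in enumerate(scenes, start=1)
    ]


# The time ranges follow the article; the scene descriptions are invented.
scenes = [
    Scene(0, 4, "A girl in a white dress with floral decorations walks through a meadow."),
    Scene(4, 7, "Close-up as she opens a jar of cream."),
    Scene(7, 10, "The product rests on a wooden table in soft daylight."),
]
prompts = build_prompts(scenes, total=10)
print("\n\n".join(prompts))
```

Each block could then be pasted into a video tool as a separate prompt. Restating the consistency line in every block mirrors the "ensure consistency from start to finish" request, though whether a given model honors it is not something this sketch can guarantee.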

In particular, video quality could be improved by using GPT Image 2.0 to create a reference sheet, a guide that converts the prompt contents into scene-by-scene images, and attaching it to the video-generating AI. With both the reference sheet and the prompts fed into ByteDance's XiDance 2.0, the video was completed in just five minutes.

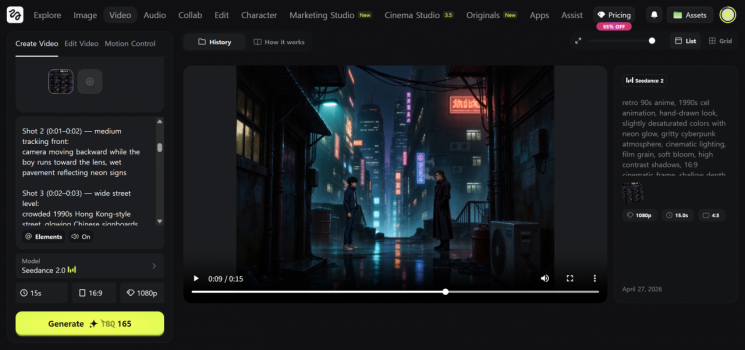

A 15-second animation video created using Synthance 2.0 on Hixfield, a design platform where AI design tools can be combined. Once prompts and reference photos are attached on the left, the video is generated in about 5 minutes. Photo by Eunseo Lee.

Effortless Image and Video Generation with a Few Words

As of April 28, examples of commercials, cinematic videos, and gameplay videos made from prompts with AI design tools such as GPT Image 2.0 and XiDance 2.0 are spreading rapidly on the design platform Hixfield and on social networking services (SNS). Following 'vibe coding,' in which code is written in natural language, 'vibe design,' in which inspirations and ideas are explained in natural language and turned into designs in a short time, is becoming part of everyday life.

What Google Labs' vibe design platform 'Stitch,' Anthropic's 'Claude Design,' and OpenAI's 'GPT Image 2.0,' all released publicly over the past month, have in common is that they let users realize designs within minutes from ideas in various forms, such as images, text, or code. Designers can now work through prompts without first creating separate initial sketches (wireframes) that visualize the planning intent or screen structure.

To keep the protagonist's subtle features consistent throughout the video, users must provide the first and last scenes, and to direct the lighting naturally, they must write prompts that divide each scene by the second. Here, the 'consistency maintenance' features built into AI tools enable non-experts to produce high-quality results. Google Labs recently open-sourced a file format called 'DESIGN.md,' which lets users maintain the same design style across multiple tasks as a project proceeds. Anthropic's Claude Design also analyzes the codebase to build a design system and automatically applies colors, fonts, and design functions (components).

© The Asia Business Daily(www.asiae.co.kr). All rights reserved.