"When AI Should Say 'I Don't Know': Identifying the Cause of Overconfidence and Boosting Reliability"

by Jeong Ilwoong

Published 27 Apr. 2026 08:56 (KST)

Excessive confidence in artificial intelligence (AI) is a risk factor that must be carefully monitored, especially in areas such as autonomous driving and medical diagnostics. If an AI system fails to admit what it does not know and instead provides incorrect or ambiguous answers, it can cloud the user's judgment. A South Korean research team has proposed a way to enhance AI reliability: training the AI to recognize situations where it lacks knowledge, which in turn reduces its overconfidence.

KAIST announced on April 27 that the research team led by Distinguished Professor Se Beom Baek of the Department of Brain and Cognitive Sciences has identified "random weight initialization" as the fundamental cause of AI overconfidence. As a solution, the team devised a "pretraining" strategy that briefly trains the neural network using "random noise."

Random weight initialization is a method in which a neural network's weights are set randomly, drawn from a probability distribution, at the start of training. It is widely used in "deep learning," which involves training artificial neural networks on data. Random noise refers to meaningless, arbitrary input data.

The research team noted that the issue of AI overconfidence emerges not only after training but also from the very beginning, at the initialization stage. They observed that even when a neural network is randomly initialized and has not learned any content, it can still exhibit high confidence when given arbitrary input data.
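The observation above can be illustrated with a minimal NumPy sketch. It is not the research team's code: the layer sizes, the He-style weight scaling, and the helper names `init_mlp` and `predict_proba` are assumptions chosen for illustration. It feeds Gaussian noise to an untrained network and compares the average top-class probability with the chance level of 1/10.

```python
import numpy as np

def init_mlp(sizes, rng):
    """Random weight initialization (He-style scaling, an assumption); biases start at zero."""
    return [(rng.normal(0.0, np.sqrt(2.0 / m), size=(m, n)), np.zeros(n))
            for m, n in zip(sizes[:-1], sizes[1:])]

def softmax(z):
    z = z - z.max(axis=1, keepdims=True)  # subtract the row max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)

def predict_proba(params, x):
    """Forward pass: ReLU hidden layers followed by a softmax output."""
    h = x
    for w, b in params[:-1]:
        h = np.maximum(h @ w + b, 0.0)
    w, b = params[-1]
    return softmax(h @ w + b)

rng = np.random.default_rng(0)
params = init_mlp([784, 256, 256, 10], rng)   # untrained 10-class network
noise = rng.normal(size=(1000, 784))          # meaningless, arbitrary inputs
probs = predict_proba(params, noise)

# "Confidence" here means the probability assigned to the top class.
mean_confidence = probs.max(axis=1).mean()
chance = 1.0 / 10
print(f"mean confidence {mean_confidence:.3f} vs chance {chance:.3f}")
```

How far the untrained confidence sits above chance depends on the depth, width, and initialization scale chosen here, so the printed value should be read as illustrative only.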

This characteristic may lead to the so-called "hallucination" problem in generative AI, in which the system creates information that appears plausible but is actually false or nonexistent.

The team sought clues to address this problem from the biological brain.

Human brains form neural circuits through "spontaneous neural activity" (brain signals that occur on their own without external input) even before birth.

Applying this concept to artificial neural networks, the research team implemented a type of "preheating stage" by conducting pretraining with random noise before actual training began. This process allows the AI to adjust its own uncertainty before it formally starts learning.
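A rough sketch of what such a noise-pretraining stage could look like follows. The article does not say what targets the noise inputs are trained against, so this example assumes each noise input is paired with a uniformly random label; the network size, learning rate, step count, and the function names `init_params` and `noise_pretrain` are likewise hypothetical. Because the labels carry no information about the inputs, minimizing cross-entropy pushes the outputs toward equal probabilities for every class.

```python
import numpy as np

def softmax(z):
    z = z - z.max(axis=1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)

def init_params(n_in, n_hidden, n_out, rng):
    """Conventional random initialization (He-style scaling, an assumption)."""
    return {
        "w1": rng.normal(0.0, np.sqrt(2.0 / n_in), size=(n_in, n_hidden)),
        "b1": np.zeros(n_hidden),
        "w2": rng.normal(0.0, np.sqrt(2.0 / n_hidden), size=(n_hidden, n_out)),
        "b2": np.zeros(n_out),
    }

def predict_proba(p, x):
    h = np.maximum(x @ p["w1"] + p["b1"], 0.0)
    return softmax(h @ p["w2"] + p["b2"])

def noise_pretrain(p, rng, steps=500, batch=512, lr=0.1):
    """SGD on fresh noise inputs with random labels (assumed setup); returns a trained copy."""
    p = {k: v.copy() for k, v in p.items()}
    n_in, n_out = p["w1"].shape[0], p["w2"].shape[1]
    for _ in range(steps):
        x = rng.normal(size=(batch, n_in))           # random noise inputs
        y = rng.integers(0, n_out, size=batch)       # labels unrelated to the inputs
        h = np.maximum(x @ p["w1"] + p["b1"], 0.0)
        g_logits = softmax(h @ p["w2"] + p["b2"])
        g_logits[np.arange(batch), y] -= 1.0         # cross-entropy gradient w.r.t. logits
        g_logits /= batch
        g_hidden = (g_logits @ p["w2"].T) * (h > 0)  # backpropagate through ReLU
        p["w2"] -= lr * (h.T @ g_logits)
        p["b2"] -= lr * g_logits.sum(axis=0)
        p["w1"] -= lr * (x.T @ g_hidden)
        p["b1"] -= lr * g_hidden.sum(axis=0)
    return p

rng = np.random.default_rng(0)
before = init_params(64, 128, 10, rng)
after = noise_pretrain(before, rng)

probe = rng.normal(size=(2000, 64))                  # held-out noise for evaluation
conf_before = predict_proba(before, probe).max(axis=1).mean()
conf_after = predict_proba(after, probe).max(axis=1).mean()
print(f"mean confidence before {conf_before:.3f}, after {conf_after:.3f}")
```

In this toy setting the pretrained copy would then be the starting point for ordinary training on real data, in place of the freshly initialized weights.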

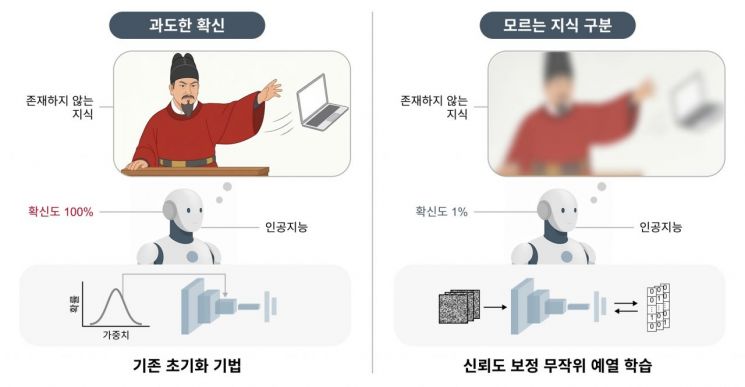

AI models that underwent this preheating process displayed lower confidence at the outset, and the overconfidence bias seen in conventional models was alleviated. In effect, the AI first learns the state of "I do not know anything yet" before it starts learning from data. According to the research team, this process naturally improved alignment between the model's accuracy (the proportion of correct predictions) and its confidence (the degree to which the model believes it is correct).
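One common way to quantify the gap between accuracy and confidence is the expected calibration error (ECE). The article does not name the metric the team used, so the sketch below is only a generic illustration, and the function name and the choice of ten bins are assumptions. Predictions are grouped by confidence, and the gap between average confidence and accuracy in each group is averaged, weighted by group size.

```python
import numpy as np

def expected_calibration_error(confidence, correct, n_bins=10):
    """Weighted average of |accuracy - confidence| over equal-width confidence bins."""
    confidence = np.asarray(confidence, dtype=float)
    correct = np.asarray(correct, dtype=float)
    edges = np.linspace(0.0, 1.0, n_bins + 1)
    ece = 0.0
    for lo, hi in zip(edges[:-1], edges[1:]):
        # Bins are (lo, hi]; a top-class probability is never exactly 0.
        in_bin = (confidence > lo) & (confidence <= hi)
        if in_bin.any():
            gap = abs(correct[in_bin].mean() - confidence[in_bin].mean())
            ece += in_bin.mean() * gap  # weight by the fraction of samples in the bin
    return ece

# A model that claims 95% confidence but is right only half the time.
overconfident = expected_calibration_error([0.95, 0.95, 0.95, 0.95], [1, 1, 0, 0])

# A model that claims 75% confidence and is right three times out of four.
calibrated = expected_calibration_error([0.75, 0.75, 0.75, 0.75], [1, 1, 1, 0])

print(f"overconfident ECE {overconfident:.2f}, calibrated ECE {calibrated:.2f}")
```

A lower value means confidence and accuracy are better aligned; a perfectly calibrated model scores zero.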

The AI models that underwent preheating also responded differently when first exposed to data. Conventional models tended to show high confidence and provide incorrect answers even for data they had not learned. In contrast, models with pretraining using random noise demonstrated a clear improvement in their ability to lower their confidence and respond with "I do not know."

AI-generated image comparing an artificial intelligence model that applied confidence calibration through warm-up training with one that did not. Image: KAIST

This research offers the possibility for AI to develop "meta-cognition": the ability not just to provide correct answers but also to distinguish between what it knows and does not know, by recognizing its own cognitive state.

Professor Baek stated, "This study demonstrates that by mimicking the process of brain development, AI can recognize its own knowledge state in a manner more similar to humans. Its significance lies in presenting a principle for AI to assess its own uncertainty, beyond simply improving accuracy."

He added, "The team's research findings can be applied to the initialization of all deep learning models, including those used in autonomous driving, medical AI, and generative AI, fields where high reliability is essential. Therefore, the technology could serve as a core method to enhance the overall trustworthiness of AI."

Jeong Hwan Cheon, who holds a master's degree in Brain and Cognitive Sciences from KAIST and is currently serving as a private in the army, was the first author of the study. The research findings were published online on April 9 in the international AI journal "Nature Machine Intelligence."

© The Asia Business Daily(www.asiae.co.kr). All rights reserved.