AI Imagines and Pre-Trains for Unfamiliar Tasks: UNIST Develops Meta-Reinforcement Learning Technique

Published 19 Aug. 2025 10:04 (KST)

Professor Seungyeol Han's Team Develops AI That Pre-Trains by Creating Its Own "Virtual Tasks"

Cheetah Robot Simulation Demonstrates Stable Running at Untrained Speeds

Selected for ICML 2025

For anyone who can walk and run, "brisk walking" is a piece of cake.

Even without being taught how often to lift your feet or how long to make each stride, you simply know by feel. A physical AI robot, by contrast, may have mastered walking and sprinting yet still fail to adjust its leg angles or force when given a new task such as running at a moderate pace, resulting in awkward movements or even a complete stop.

Lack of adaptability to untrained situations has long been cited as a limitation of physical AI technology. A new meta-reinforcement learning technique has now emerged to address this issue by enabling AI to imagine and preview new tasks on its own.

The team led by Professor Seungyeol Han at the Graduate School of Artificial Intelligence at UNIST has developed a technique called TAVT (Task-Aware Virtual Training), which trains artificial intelligence to adapt to new tasks it has never encountered before.

The research team: Professor Seungyeol Han (left) and researcher Jungmo Kim (first author). Photo provided by UNIST.

The learning technique developed by the research team allows AI to create its own "virtual tasks" and learn them in advance. It consists of a deep learning-based representation learning module and a generation module. The representation learning module quantifies the similarity (distance) between different tasks to understand their latent structure, and the generation module then combines the resulting task representations to create new virtual tasks. These generated virtual tasks are designed to preserve the characteristics of the original tasks, providing a preview effect for situations the AI has never trained for.
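As a rough illustration of the idea, and not the research team's implementation, the two-module description can be sketched in a few lines of Python. The sketch assumes each training task has already been encoded as a latent vector, measures task similarity with Euclidean distance, and creates virtual tasks as random blends of two training tasks. The function names, the blending rule, and the example vectors are all hypothetical.

```python
import numpy as np


def pairwise_task_distances(latents):
    """Euclidean distance between every pair of task embeddings."""
    diff = latents[:, None, :] - latents[None, :, :]
    return np.linalg.norm(diff, axis=-1)


def sample_virtual_tasks(latents, num_virtual, rng):
    """Blend two randomly chosen training-task embeddings per virtual task.

    Assumption: a convex combination stands in for the article's
    "generation module"; the actual method may combine tasks differently.
    """
    n = latents.shape[0]
    i = rng.integers(0, n, size=num_virtual)
    j = rng.integers(0, n, size=num_virtual)
    alpha = rng.uniform(0.0, 1.0, size=(num_virtual, 1))
    return alpha * latents[i] + (1.0 - alpha) * latents[j]


rng = np.random.default_rng(0)

# Hypothetical 2-D embeddings of three training tasks.
train_latents = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]])

distances = pairwise_task_distances(train_latents)
virtual_latents = sample_virtual_tasks(train_latents, num_virtual=5, rng=rng)
```

Because each virtual embedding lies between two real ones, it stays close to the structure of the training tasks while still covering points the agent never saw directly, which is the "preview effect" the article describes.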

Jungmo Kim, the first author of the study, explained, "Traditional reinforcement learning is designed to learn the optimal policy for a single task, which leads to a sharp drop in performance in new situations. While there are meta-reinforcement learning techniques that expose AI to a variety of tasks, it is still difficult to adapt to out-of-distribution situations that fall outside the training range."

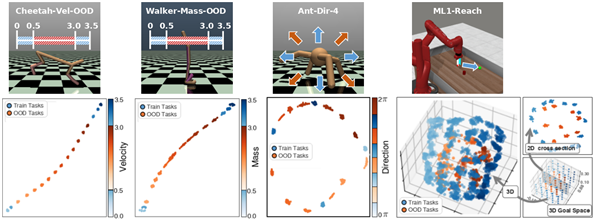

The research team applied this learning technique to various robot simulation environments, including cheetah, ant, and bipedal walking robots, and confirmed that adaptability to untrained tasks had improved.

In particular, in the Cheetah robot simulation (Cheetah-Vel-OOD) experiment, when the TAVT technique was applied, the robot was able to quickly identify target speeds and maintain stable movement even at intermediate speeds (such as 1.25 and 1.75 m/s) that it had never experienced before. In contrast, robots using existing meta-reinforcement learning techniques adapted more slowly or frequently fell over.
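To make the out-of-distribution setup concrete, the following Python sketch holds out the intermediate target speeds mentioned above and scores a rollout by how far its speed strays from the target. It is only an illustration: the list of candidate speeds, the recorded rollout speeds, and the tracking-error metric are assumptions, not values or metrics reported by the research team.

```python
def split_target_speeds(candidates, held_out):
    """Separate target speeds seen in training from held-out test speeds."""
    train = [v for v in candidates if v not in held_out]
    test = [v for v in candidates if v in held_out]
    return train, test


def mean_tracking_error(speeds, target):
    """Average absolute gap between measured speeds and the target speed."""
    return sum(abs(v - target) for v in speeds) / len(speeds)


# Hypothetical candidate target speeds in m/s; 1.25 and 1.75 are the
# intermediate speeds the article cites as never seen during training.
candidates = [0.5, 1.0, 1.25, 1.5, 1.75, 2.0]
held_out = {1.25, 1.75}
train_speeds, test_speeds = split_target_speeds(candidates, held_out)

# Hypothetical speeds recorded during one evaluation rollout at 1.25 m/s.
rollout = [1.0, 1.2, 1.3, 1.25]
error = mean_tracking_error(rollout, target=1.25)
```

A lower tracking error on the held-out speeds would indicate that the agent identified the unseen target quickly and held it steadily.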

Professor Seungyeol Han stated, "This technique can enhance the task generalization performance of AI agents and can be widely applied to fields where flexible response to various situations is essential, such as physical AI robots, autonomous vehicles, and drones."

This research was accepted at ICML 2025 (International Conference on Machine Learning), one of the world's top three AI conferences. ICML 2025 was held in Vancouver, Canada, from July 13 to July 19.

The research was supported by the Ministry of Science and ICT and the Institute of Information & Communications Technology Planning & Evaluation (IITP) through projects such as the "Regional Intelligence Innovation Talent Development Project," "Core Source Technology Development for Human-Centered AI," "AI Graduate School Support (Ulsan National Institute of Science and Technology)," and "Development of Information Entropy-Based Exploration Techniques for Improving the Convergence of Continuous Space Reinforcement Learning."

© The Asia Business Daily (www.asiae.co.kr). All rights reserved.