"AI Chatbot 'Iruda' Developer Fined 103.3 Million Won for 'KakaoTalk Conversation Theft'"

by Cho Seulkina

Published 28 Apr. 2021 14:08 (KST)

Updated 15 Mar. 2023 19:45 (KST)

[Asia Economy Reporter Seolgina Jo] The government has imposed penalties totaling 103.3 million KRW on Scatter Lab, the developer that used the KakaoTalk conversations of about 600,000 users without their consent while developing the AI chatbot 'Iruda.' This is the first sanction against an AI technology company for indiscriminate processing of personal information.

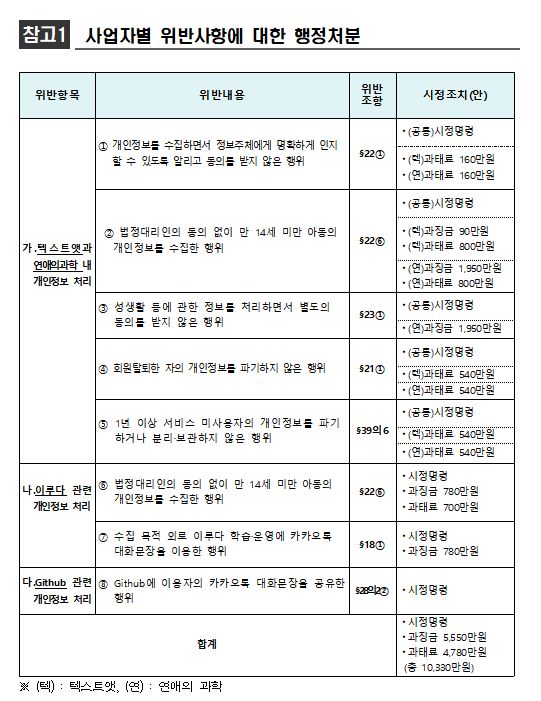

On the morning of the 28th, the Personal Information Protection Commission decided on this administrative disposition at its 7th plenary meeting. The decision came about 100 days after the Commission opened its investigation in mid-January in the wake of the Iruda controversy. The Commission found that Scatter Lab had committed eight violations of the Personal Information Protection Act in connection with Iruda and imposed a penalty surcharge of 55.5 million KRW and an administrative fine of 47.8 million KRW, totaling 103.3 million KRW, along with corrective orders.

According to the investigation, from February 2020 to January 2021 Scatter Lab used KakaoTalk conversations collected through its apps 'Textat' and 'Science of Love' to develop and operate the AI behind Iruda, a chatbot service aimed at Facebook users.

When training the algorithm for the Iruda AI model, Scatter Lab used approximately 9.4 billion KakaoTalk conversation sentences from about 600,000 users without taking any measures, such as deletion or encryption, to protect the personal information the conversations contained, including names, phone numbers, and addresses.

In addition, to operate the Iruda service, the company compiled about 100 million KakaoTalk conversation sentences written by women in their 20s into a response database, from which Iruda selected the sentences it said to users.

In its processing of personal information through Textat and Science of Love, Scatter Lab was found to have violated the Personal Information Protection Act by ▲collecting personal information of children under 14 without the consent of their legal guardians ▲processing information related to sexual life without separate consent ▲failing to destroy the personal information of members who had withdrawn ▲failing to destroy or separately store the personal information of users who had been inactive for more than a year. The investigation also confirmed further violations, such as failing to notify data subjects and obtain their clear consent.

In particular, the Commission ruled that listing 'new service development' in the privacy policies of Textat and Science of Love does not mean that users who merely logged in consented to their data being used for a new service such as Iruda. A bare mention of 'new service development' does not let users anticipate that their KakaoTalk conversations would be used to develop and operate Iruda. It also restricts their right to control their personal information and can cause unforeseeable harm. Scatter Lab was therefore found to have used personal information beyond the purpose for which it was collected.

In its processing of personal information for Iruda itself, the company was also found to have committed violations including ▲collecting personal information of children under 14 without legal guardian consent ▲using KakaoTalk conversation sentences to train and operate Iruda beyond the purpose of collection.

The Commission further found that from October 2019 to January 2021, Scatter Lab posted AI models on GitHub, a code-sharing and collaboration site for developers, together with 1,431 KakaoTalk conversation sentences that contained 22 given names (without surnames), 34 pieces of location information (at the district and neighborhood level), gender, and relationship information (friend or lover). The Commission judged that this violated Article 28-2, Paragraph 2 of the Personal Information Protection Act, because pseudonymized information containing 'information that can be used to identify specific individuals' was made available to an unspecified number of people.

The Iruda case provoked intense debate during the disposition process, with even experts divided. Given the case's impact on the public and on industry, the Commission gathered opinions from industry, the legal and academic communities, and civic groups on how personal information is processed in AI development and service provision and on the related legal and technical issues, and it held multiple committee discussions.

Yoon Jong-in, Chairperson of the Personal Information Protection Commission, said, “This case is significant in making clear that companies may not freely use information collected through one service for other services, and that data subjects must be clearly informed about, and consent to, the processing of their personal information.”

He added, “I hope the disposition result will serve as a guide for AI technology companies on the proper direction for personal information processing and encourage companies to strengthen their own management and supervision.”

The Commission plans to release an 'AI Service Personal Information Protection Self-Checklist' that AI developers and operators can use in the field, and to offer on-site consulting to help AI technology companies strengthen their personal information protection capabilities.

© The Asia Business Daily(www.asiae.co.kr). All rights reserved.